In this sample chapter from Zero Trust Architecture, uncover the foundations of Zero Trust strategy with insights into its five pillars: policy overlay, identity-centric approach, vulnerability management, access control, and visibility. Learn to identify critical capabilities, establish a solid foundation, and define risk tolerance. The authors offer a comprehensive guide for implementing Zero Trust in your organization.

Chapter Key Points

This chapter provides an overview of the five pillars of Zero Trust, including how to overlay policy, being identity-led, providing vulnerability management, enforcing access control, and providing visibility into control and data plane functions.

We provide ways to identify what Cisco defines as Zero Trust Capabilities and where to start looking in the organization for these capabilities.

We also provide an extensive reference, or “dictionary of capabilities,” that can be used for many efforts within an organization.

Capabilities outlined in this chapter may be broken down further, but for the purposes of achieving Zero Trust, the book focuses on the critical capabilities needed.

We establish a foundation to build Zero Trust into an organization.

The cornerstone to creating a Zero Trust strategy is to identify the capabilities of an organization using a focused process to identify how well a capability is addressed by reviewing technical administration capabilities, functional cross-organizational process capabilities, and overall adoption of the capabilities.

By reading and referring to this chapter of the book, you will be able to identify what Cisco defines as Zero Trust Capabilities as well as where to start looking within an organization for these capabilities. The organization will need to review its requirements related to policy creation and fulfillment, along with what is deemed critical infrastructure, to define the overall risk tolerance for issues or gaps.

After a risk tolerance level is established for the organization, an assessment of the available capabilities should be performed. Risk assessments are often performed by an outside organization to remove critical biases and to enable all parts of the organization to consume the findings of the assessment. Priorities and gaps that are identified should establish a strategy for going forward and a roadmap for a Zero Trust–driven organization.

Following chapters in this book outline use cases, methods, and best practices to implement Zero Trust, as outlined in this critical foundational chapter.

Cisco Zero Trust Capabilities

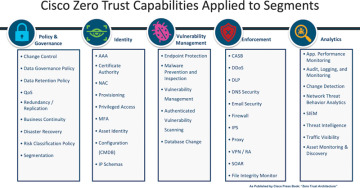

The pillars of the Cisco Zero Trust Capabilities, as outlined in Figure 2-1, represent various capabilities that are necessary for a successful Zero Trust strategy. These capabilities are not all-inclusive; they function as the minimum set required. Some organizations may need more specific capabilities relevant to their unique use cases.

Figure 2-1 Cisco Zero Trust Capabilities

This chapter develops your understanding of each capability and what that capability can be used for within an organization to move toward developing a stronger security posture against would-be attackers. We begin with the Policy & Governance pillar because it establishes what can or cannot be done within the organization. We then move to the Identity pillar, which establishes the identity of not only users but also devices, transport, and many other object types. It cannot be overstated how important Identity is to establishing a stronger security posture.

The Vulnerability Management pillar enables organizations to identify, track, and mitigate known vulnerabilities to reduce organizational risk. The Enforcement pillar capabilities are traditionally thought of as security operations center (SOC) or network operations center (NOC) tools; however, as we review these capabilities in the context of Zero Trust, you will see that they extend beyond these groups and are used or managed by multiple teams throughout the organization. In the Analytics pillar, we review how an organization can see what is happening to objects and what is acting upon them inside and outside of the environment.

Having well-established governance, identity stores, vulnerability management, enforcement, and visibility capabilities enables a Zero Trust strategy.

Policy & Governance Pillar

Finding the right balance of security and business enablement is a crucial requirement for any Zero Trust strategy. The primary category to help achieve this balance is the Policy & Governance pillar of Cisco’s Zero Trust Model. With the Policy & Governance pillar, an organization may establish how tightly the entire organization is governed, how long information is retained, how the organization will recover in an emergency, and how important sets of data are managed from group to group. Organizations also need to focus on their industry, their regulations, the organization and its business goals, and their customers’ risk tolerance levels. The Policy & Governance pillar focuses on key factors that must be established to enable the Zero Trust journey.

Change Control

It is necessary for many organizations to have change management services. Many use the Information Technology Infrastructure Library (ITIL) change management process. ITIL is an accepted approach to managing Information Technology services to support and enable organizations. ITIL enables organizations to deliver services. Frameworks such as ITIL help establish architectures, processes, tools, metrics, documentation, technology services, and configuration management practices.

Changes must be coordinated and managed, and their details must be disseminated to relevant parties. A unique characteristic of Zero Trust is that changes occur end to end within the environment, so special care must be taken to ensure smooth forward progress. As a critical part of the change process, testing helps ensure that production deployments in support of Zero Trust can be accomplished in a timely and effective fashion.

Data Governance

It’s critical to classify data and to understand where it is stored and how it is monitored for compliance with organizational policies. Some examples of data classifications are personally identifiable information (PII), Electronic Protected Health Information (ePHI), Payment Card Information (PCI), Restricted Intellectual Property, and Classified Information. Data governance also includes a well-defined and maintained configuration management database that records where all data stores are located and who owns them within the organization, along with their data classification, labeling, storage, and access requirements.

Data Retention

Data retention is dictated based on organizational and regulatory requirements. After an incident, the ability to determine the cause of an outage or breach is critical information that must be retained both for restoration of service as well as audit purposes. Data retention must consider data at rest, how long the data must be stored, and when the data should be purged to limit organizational liability. The legal and compliance teams of the organization manage policy requirements on what data an organization must retain and for how long.

Quality of Service (QoS)

Quality of service, including the marking and prioritization of key traffic in times of micro or long-term congestion, is a key component of availability: it keeps control plane traffic flowing so that Zero Trust capabilities function as intended. QoS provides preferential treatment of traffic to meet defined policy requirements so that critical security and business functions continue without undue impairment. Without this safeguard in place, organizations run the risk of network congestion having unpredictable impacts on traffic and the solutions that rely on that traffic.

Redundancy

Redundancy is necessary to maintain availability and is part of a Zero Trust strategy. Critical components of the ecosystem are required to be duplicated by many frameworks, standards, regulations, and laws. Redundancy can have multiple aspects: control plane redundancy is necessary for the functioning of capabilities, whereas data plane redundancy is necessary to ensure that business functions continue unimpeded.

Replication

Replication involves the duplication and the encryption of key data stores to backup storage arrays and offline storage backups, which provide a restoration path in the event of partial or complete loss of an environment due to ransomware. Software automation is necessary to ensure that the proper environments are replicated to proper locations. Without replication automation, errors are inevitable, and critical data stores may be overwritten, creating a large-scale outage requiring full restoration of one or many databases.

Auditors, regulators, or governing bodies routinely validate these controls. The key point to note is the regulations, standards, or laws are the minimum of replication that should be in place for the organization. Protecting an organization’s data is its top concern. Without protective controls—that is, encryption and locations around these replicated data stores—there can be no data integrity, confidentiality, and in the end availability; therefore, a gap in Zero Trust is created.

Business Continuity

Confidentiality, integrity, and availability are the foundation of all security programs and are necessary in a Zero Trust strategy. Business continuity relies on a well-executed Zero Trust strategy. The development of business continuity teams and business continuity documentation that can be accessed by the critical teams in the event of a crisis is a cornerstone to business continuity. Please note that a business continuity plan (BCP) should always be protective of human life first, in all cases. Ensure teams are safe at the outset of plan activation and throughout the event. A well-developed communication plan will assist in locating and checking in on those associated with the organization. The plan should also be protective of what is shared publicly to provide a level of protection to recovery efforts.

The second most important step is maintaining the integrity of data in the middle of responding to a business continuity event. Maintaining data integrity may seem trivial to some of those responding to a critical event, but that is exactly when an attacker will attack. Ensure “temporary” controls or measures do not expose the organization to issues with data integrity along with availability. Some ransomware attacks may activate the business continuity plan and be the cause of an organization-level outage. Restricted or intellectual property may be at risk.

Work out these scenarios in advance and partner with your nearest fusion center and other government entities to respond to these critical types of events. Tabletop exercises may expose gaps, but putting teams through BCP drills reveals how prepared teams are to respond and may uncover shortcuts that could expose the organization’s critical data stores.

Disaster Recovery (DR)

Typically, a disaster recovery event is activated as soon as a problem has been detected, but in many cases the business continuity plan (BCP) should be activated first. After the BCP team assesses the situation, recovery efforts are officially started. The DR plan may include many of the same contacts from a leadership perspective as the BCP does, but the DR plan focuses on recovering a solution, a set of solutions, or the critical infrastructure of the organization.

The scope of any DR event may be assessed and categorized as minor, or it may turn out to be broader. At first, the event may impact only one aspect of the business or even one solution, but teams should avoid tunnel vision and consider other systems and environments that could also be impacted. Activating the proper process and notifying the right level of leadership is a function of the business continuity plan based on impact and risk. It is important that proper criteria have been established for DR planning, primarily the criticality of the system to the organization and its impact upon daily functioning, and therefore the acceptable limits of data loss and recovery time. These limits are normally expressed as two objectives: the recovery point objective (RPO) and the recovery time objective (RTO). RPO defines the acceptable window of time for transactional data loss. Stated another way, RPO is the maximum amount of data or work that the organization accepts will be unrecoverable after a system failure. RTO, on the other hand, is the acceptable amount of time to restore the system and data back to normal. These are the minimum variables that should be defined for each system where it is determined that DR capability is required.
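As a minimal sketch of how these two objectives might be checked after a DR test, the following Python example compares measured values against per-system targets. The names `RecoveryObjectives` and `evaluate_dr_test`, and all of the durations, are illustrative assumptions rather than part of any specific product or framework.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class RecoveryObjectives:
    """Hypothetical per-system DR targets."""
    rpo: timedelta  # maximum acceptable window of lost data
    rto: timedelta  # maximum acceptable time to restore service


def evaluate_dr_test(objectives, last_good_backup, failure_time, restored_time):
    """Return (rpo_met, rto_met) for one DR test or real event."""
    data_loss_window = failure_time - last_good_backup
    downtime = restored_time - failure_time
    return data_loss_window <= objectives.rpo, downtime <= objectives.rto


# Illustrative values only: a 15-minute RPO and a 4-hour RTO.
targets = RecoveryObjectives(rpo=timedelta(minutes=15), rto=timedelta(hours=4))
failure = datetime(2024, 1, 10, 9, 0)
rpo_met, rto_met = evaluate_dr_test(
    targets,
    last_good_backup=failure - timedelta(minutes=10),
    failure_time=failure,
    restored_time=failure + timedelta(hours=5),
)
print(rpo_met, rto_met)  # prints: True False
```

In this assumed scenario the last backup was recent enough to satisfy the RPO, but the five-hour restoration exceeded the RTO, which is the kind of gap a DR test is meant to surface.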

DR plans go hand in hand with the business continuity plan. With proper controls as defined in the “Policy & Governance” section of the book, disaster recovery should be achievable and complete. Development and testing of a DR plan are part of the standup procedures for new environments. Each environment must define a method of recovery prior to “production go-live” events so the definition of what constitutes a successful recovery can be worked through and can be checked off as complete during an actual DR event or during a DR test. If the plan is not created until after the application has been purchased, many installation requirements are forgotten, neglected, or known by only a handful of individual team members. Testing of the DR plan is required for both new and old ecosystems. The adage still holds true: “If there is no testing, there is no DR plan.” Adding to that, without a business continuity plan and a disaster recovery plan, there cannot be a valid and implemented Zero Trust strategy.

Risk Classification

Risk classification helps inform multiple other capabilities such as data governance, business continuity, and redundancy. This includes classifying the risk for data as well as capabilities. For data, risk must be assessed to understand the criticality of the data to the organization. For capabilities, risk must be classified to understand what impact to the organization may occur if that capability ceases to operate as expected.

Risk classification structures should be developed with compliance and legal teams to ensure that the business is protected and to ensure business continuity. Having a Zero Trust mindset as these classifications are developed or updated will go a long way to provide greater protections and controls put in place, while at the same time enabling the business.

Identity Pillar

Identity is a concept to represent entities that exist on a network and is analogous to what an entity has or is. Sometimes, entities may offer a configured or known credential, while other times they do not. Identity alone is not enough to gain access to data. Determining identity is a fundamental process of authentication. Organizations using an identity alone as a basis to grant access to an object from a central authority are not aligning to a full Zero Trust strategy, because full context of the identity has not been established. As an example, possessing a driver’s license as identification does not allow anyone on an airplane. Someone or something must verify the identification matches the entity attempting to use the license against valid confirmation information.

Authentication, Authorization, and Accounting (AAA)

What is meant by the phrase “Triple A”? In simple terms, authentication is a validation of the “who” or “what” of an entity, authorization is the set of resources or data that the authenticated entity can access, and accounting is the record of interactions that occur throughout the operation.

The first step for any entity accessing the network is to authenticate. This step requires that the entity requesting authentication (be it a person, computer, or any number of other networked devices) provide details about itself in at least one form. These details may be provided directly by the entity, for example, as a username and password, a certificate, or a MAC address.

Authentication can be accomplished using multiple criteria, which is referred to as multifactor authentication. The process of authenticating does not determine which resources the entity may interact with. Take, for example, an ATM: anyone can walk up to one with a valid debit card and insert it into the machine. With the proper PIN code, the user will authenticate, but possession of the card and PIN code does not explicitly indicate which accounts that person should have access to, which leads to the second “A,” authorization.

Authorization involves taking the identity of the authenticated entity and, in combination with other conditions, determining through a defined policy what level of resource or data access should be provided. Depending on the policy engine in use, these conditions can become quite granular. Some examples of additional conditions for authorizing network access might include device health or posture, a directory service group membership, time and day variables, device identity, or device ownership. Going back to the ATM example, after authentication, the customer is provided access to their accounts after a policy engine makes the necessary determinations, such as permission to view and interactions allowed with each account.
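The way a policy engine combines identity with additional conditions can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `AccessRequest` fields, the `authorize` function, the group name, the business-hours window, and the returned access levels are all hypothetical and do not reflect any particular vendor's policy engine.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AccessRequest:
    """Context gathered after authentication (all fields are illustrative)."""
    identity: str
    groups: frozenset = frozenset()
    posture_compliant: bool = False
    corporate_owned: bool = False
    hour_of_day: int = 0  # 0-23, local time


def authorize(request: AccessRequest) -> str:
    """Map an authenticated request to a hypothetical access level."""
    if not request.posture_compliant:
        return "quarantine"  # unhealthy devices get remediation access only
    in_business_hours = 7 <= request.hour_of_day < 19
    if ("finance" in request.groups and request.corporate_owned
            and in_business_hours):
        return "finance-apps"
    return "internet-only"  # authenticated, but no further conditions met


request = AccessRequest("alice", frozenset({"finance"}), True, True, 10)
print(authorize(request))  # prints: finance-apps
```

The same identity receives a different result when posture, ownership, or time of day changes, which is the point of evaluating authorization separately from authentication.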

Finally, accounting is a way to record the actions an entity on the network takes for audit purposes. This includes documenting when the entity attempts to authenticate, the result of that authentication, and what interactions are made with the authorized resources; the record ends after the entity disconnects or logs off from the network. This accounting data is crucial for both troubleshooting and forensic purposes. In troubleshooting, it provides valuable data to help identify where in the process of AAA the entity is encountering a problem, such as why it is not being authorized for expected data or resources. For forensic purposes, it provides the ability to understand when an entity accessed the network, what actions were taken, and when it disconnected or if it is still connected to the network.
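A minimal sketch of what such an accounting trail could look like follows. The `AccountingLog` class, the event names, and the entity name are assumptions made for illustration; a real deployment would send these records to a dedicated accounting or logging system rather than keep them in memory.

```python
import json
from datetime import datetime, timezone


class AccountingLog:
    """In-memory stand-in for an accounting store (hypothetical)."""

    def __init__(self):
        self.records = []

    def record(self, entity, event, detail=""):
        """Append one timestamped accounting record."""
        self.records.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "entity": entity,
            "event": event,
            "detail": detail,
        })

    def session(self, entity):
        """Return the ordered event names recorded for one entity."""
        return [r["event"] for r in self.records if r["entity"] == entity]


log = AccountingLog()
log.record("laptop-42", "auth-attempt")
log.record("laptop-42", "auth-success")
log.record("laptop-42", "resource-access", "payroll-db")
log.record("laptop-42", "disconnect")
print(json.dumps(log.records[2], indent=2))
```

Reading the session back in order answers both the troubleshooting question (where in the AAA process did the entity stop?) and the forensic one (what did it touch, and is it still connected?).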

AAA Special Conditions

It is also important to mention the challenges for AAA brought about by the rapid increase in Internet of Things (IoT) devices. In most cases, these devices operate in a more rudimentary fashion when it comes to network connectivity and may not be capable of providing a username and password for authentication, much less a certificate. In some cases, while these capabilities may be available from the device, a lack of suitable management may make use of these features not technically feasible. In either case, it is important to ensure that alternatives are available to authenticate and authorize these devices effectively and safely. Commonly, this will mean using the MAC address to authenticate the device against a database, and authorization will follow a similar set of conditions as for other entities. There are numerous efforts underway to improve the interaction of IoT devices, especially regarding enterprise networks, such as the Manufacturer Usage Description (MUD), which provides the purpose of the device to the policy engine. Ultimately, though, these devices can be more easily spoofed when authenticated through MUD or MAC address-based paths, so caution must be taken. This lower level of confidence in positively identifying and authenticating the entity means special thought and care must be taken when assigning authorization to resources or data.

Certificate Authority

An alternative, though slightly higher-overhead, way to identify devices uniquely within a network is for them to present a certificate. A certificate, simply put, is a unique identity issued to a user or endpoint, which relies on a chain of trust. This chain of trust consists of a centralized authority being the root of the trust, and branches in a tree-like structure providing for distributed trust the world over. Issuance of a certificate to endpoints or to users provides for an “I trust this authority, and therefore I trust this entity” ability.

Certificates are typically considered a stronger method of authentication because they make it possible both to prevent exportation of the identity and to validate the identity presented within the certificate against a centralized identity store: for example, Active Directory, which is the Microsoft directory store; a directory accessed through the Lightweight Directory Access Protocol (LDAP), which may be an open-source directory store; or Azure Active Directory Domain Services (Azure AD DS), which is cloud-based.

By blocking the private aspects of the certificate from being exported, the certificate cannot be shared with another user or even another device, making it a secure identification mechanism. In addition, like directory service attributes, alternative names and attributes can be added into a certificate that can be used to uniquely identify an endpoint and what access the device should be provided on the network.

Certificates are typically exchanged with the policy engine via Extensible Authentication Protocol–Transport Layer Security (EAP-TLS). These certificates can be assigned to either the endpoint or the user. The combination of user and machine certificates creates a unique contextual identity. This contextual identity provides differentiated access based on the attributes associated with the type of identity, whether that be user, application, or machine.

Network Access Control (NAC)

A network access control system provides a mechanism to control access to the network. There are many solutions available to provide this Zero Trust Capability to maintain control of who or what accesses the network for any organization. The NAC system needs to have the ability to integrate with the other Zero Trust Capabilities, described within this chapter. The NAC system will directly participate in the Policy & Governance, Identity, Vulnerability Management, Enforcement, and Analytics pillars. Policy & Governance must influence the configuration of the NAC system.

After a device is purchased, onboarded, and identified, there needs to exist a database and policy engine to validate the identity using AAA (see the previous section). This policy engine should contain the following:

Integrated authentication into a directory service

Endpoint posture for vulnerabilities

Ability to control endpoint access via policy

For example, with identity, there is a reliance upon Directory Services, or a certificate authority, which requires that the NAC system integrate with the identity store to determine and enforce AAA. NAC should utilize this identity to link vulnerability into the contextual identity, then apply and enforce controls, and then log these actions locally or to an integrated system, such as a Security Information and Event Management (SIEM) system. Logging events being generated in the NAC system requires collection of what was done and why to be able to better analyze devices on the network and their potential security implications to the network.

Provisioning

Provisioning is a process to acquire, deploy, and configure new or existing infrastructure throughout an organization based on Policy & Governance. Provisioning heavily impacts the decision-making process when implementing a Zero Trust strategy. Provisioning happens in multiple phases across multiple groups in the environment. All stakeholders must understand the importance of a unified policy and process.

Organizations define their own needs to meet specific requirements. A comprehensive Zero Trust strategy requires a holistic approach that addresses the flexibility needed in the process while maintaining tight controls that enforce the policies of the organization and regulating bodies. Proper provisioning practices dictate that access needs be documented in a common form of tracking and visibility during all stages of the infrastructure life cycle. The following sections detail some Provisioning policy enforcement categories to consider.

Device

Common device types include printers, computers, IoT devices, operational technology (OT), and specialty equipment, whether managed or unmanaged. Groups responsible for creating, maintaining, and executing these functions exist in almost every facet of an organization. Devices need to respect the presence of Zero Trust controls in any physical, logical, or network environment.

User

Users can exist in many parts of the organization but, unlike devices, should all be controlled within a defined role within the organization. User identities created for third parties must map to a role within the organization. Access for devices, people, and processes relies on these role-based user accounts. These accounts may represent multiple roles for differing functions. Zero Trust relies upon user identity, which is an important attribute in aligning policy to an action. “User” is a component of the Zero Trust Identity Capability for user attribution, assignment, and provisioning and builds a foundation for establishing trust.

People

A Zero Trust strategy should inform and guide all onboarding and offboarding processes for each entity within an organization. People have the potential of becoming soft targets and therefore vulnerabilities to the organization. Security threat awareness, training, and testing help build resilience within the people who work for the organization. The scope of provisioning as it relates to people applies not only to those with access to systems. The provisioning of users, devices, access, services, assets, and many other resources is affected by these processes. Zero Trust controls attempt to apply attribution to any interaction with people throughout the organization, third parties, or partners. These concepts can branch out to encompass interactions with any asset by a person to any connection.

Infrastructure

The Identity of an infrastructure object defines what the object is and what it needs to function, and it relates the object to the valid activities it performs to support the organization.

Infrastructure provisioning processes create the pathways through which access to objects occurs. Administrators need to define what protections are needed to enable the use of the infrastructure to support the user community and the functions of their role. Administrators tasked with supporting the infrastructure mediate how and when provisioning steps interact with services and flows.

Services

Services enable an application or a suite of applications to support and allow users to fulfill their defined user role within the organization. Without services, there is no point in giving a user access to an application. The services attribute for Identity capabilities is used to define access attributes for users to be able to execute critical functions assigned to their roles.

Service Identity provisioning processes interrelate devices, users, people, and infrastructure to further build contextual identity capabilities. Documenting the access requirements and restrictions associated with devices, users, people, and infrastructure creates policy that can be directly enforced by Zero Trust. Services rely on consistent and accurate identity information derived from provisioning to define these policies in an effective manner. Access denial and access acceptance are determined by documenting these identifiers and classifying what is allowed to utilize the service and under what conditions the service is being requested.

Privileged Access

Privileged access is elevated user access required to perform functions to support and manage systems. Privileged Access can be found within any portion of the infrastructure, including network appliances, databases, applications, operating systems, cloud provider platforms, communications connectivity systems, and software development. Privileged access should follow the concept of “least privileged access” and should be limited to a very small population of users. Types of users leveraging privileged access include but are not limited to database administrators, backup administrators, third-party application administrators, treasury administrators, service accounts, and systems administrators, along with network and security teams.

Privileged access introduces higher risk to data, availability, or controls. Attackers may leverage privileged access to cause the most damage to an environment, ecosystem, or proprietary information, so an organization should closely guard, monitor, and control this type of Identity.

To monitor and control privileged access, solutions are available that limit this higher level of access with timers and apply stronger controls, including logging of the changes made while privileged access is in use. It is recommended that organizations audit the use of privileged access on a routine basis with management oversight and signoff. Many regulations and laws require privileged access controls be put in place within an organization, with demonstrable compliance to external auditors on a routine basis. Teams should review the requirements for their organization based on regulations and legal team guidance.
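The combination of a timer and change logging described above can be sketched as follows. The `PrivilegedGrant` class, the user and role names, and the 30-minute window are hypothetical; commercial privileged access management products implement this with far more safeguards.

```python
from datetime import datetime, timedelta


class PrivilegedGrant:
    """Hypothetical time-boxed elevation with an audit trail."""

    def __init__(self, user, role, granted_at, duration):
        self.user = user
        self.role = role
        self.expires_at = granted_at + duration
        self.audit = []  # (timestamp, action, allowed) tuples for later review

    def perform(self, action, now):
        """Allow the action only inside the window; log every attempt."""
        allowed = now < self.expires_at
        self.audit.append((now, action, allowed))
        return allowed


start = datetime(2024, 1, 10, 9, 0)
grant = PrivilegedGrant("dba-jane", "db-admin", start, timedelta(minutes=30))
grant.perform("ALTER TABLE payroll", start + timedelta(minutes=5))   # inside the window
grant.perform("DROP TABLE payroll", start + timedelta(minutes=45))   # after expiry
```

Because denied attempts are logged alongside permitted ones, the audit trail supports the routine management review that the text recommends.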

Multifactor Authentication (MFA)

Multifactor authentication is the practice of leveraging factors of what a user knows (e.g., a password), what a user has (e.g., a managed device or device certificate), who a user is (e.g., biometrics), and what a user can solve (e.g., a CAPTCHA challenge); it is a foundational principle of Zero Trust. These aspects allow for many interpretations, and therefore, the Policy & Governance pillar needs to address the requirements of MFA within a given organization that are pushed out to all users of the environment.

Classical usernames are identifiable through email addresses, and passwords may be poorly chosen by users or reused on many systems, making them vulnerable to brute-force attacks. By leveraging additional factors of MFA, organizations increase their resistance to attack; however, strong onboarding/offboarding processes for employees, interns, and contractors, with monitoring and auditing, are required to maintain control of identity stores and MFA factors and to limit unauthorized access.

In some cases, organizations may want to move to a true “passwordless” access control methodology using only device certificates to increase convenience to the user population. It is recommended that organizations review this method with legal teams and regulating bodies prior to moving to a true “passwordless” approach. For example, on most operating systems, after the user logs in to the machine, a supplicant presents a certificate as an authentication mechanism to a policy engine. Are a user login and a device certificate enough for the organization and the regulations with which it is required to comply? These challenges in defining MFA may occur, so organizations should be specific about whether MFA means two or more of the same factors or a unique combination of factors. These details need to be specified by the organization via Policy & Governance.
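The distinction between "two or more of the same factors" and "a unique combination of factors" can be made concrete with a small policy check. In this sketch, the factor names, the category labels (`knowledge`, `possession`, `inherence`), and the `satisfies_mfa` function are assumptions chosen for illustration; an organization would define its own list through Policy & Governance.

```python
# Hypothetical mapping of factor names to factor categories.
FACTOR_CATEGORIES = {
    "password": "knowledge",
    "pin": "knowledge",
    "device_certificate": "possession",
    "hardware_token": "possession",
    "fingerprint": "inherence",
}


def satisfies_mfa(presented, require_distinct_categories=True, minimum=2):
    """Check presented factors against a hypothetical MFA policy."""
    unknown = [f for f in presented if f not in FACTOR_CATEGORIES]
    if unknown:
        raise ValueError(f"unrecognized factors: {unknown}")
    if require_distinct_categories:
        # Count categories, so a password plus a PIN counts only once.
        return len({FACTOR_CATEGORIES[f] for f in presented}) >= minimum
    # Count distinct factors regardless of category.
    return len(set(presented)) >= minimum


print(satisfies_mfa(["password", "pin"]))                 # prints: False
print(satisfies_mfa(["password", "device_certificate"]))  # prints: True
```

Under the stricter setting, a password plus a PIN fails because both are things the user knows, whereas a password plus a device certificate passes. Setting `require_distinct_categories=False` models the looser interpretation.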

Asset Identity

Asset identity is a method, process, application, or service that enables an organization to identify physical devices that interact with the organization with certainty of the actual real device type, location, and key attributes.

Organizations need to be able to identify all unique assets operating within their ecosystems. Based on the identity of the asset, the metadata adds context that will drive Policy & Governance requirements for the asset type involved or for the specific asset, which is necessary for a Zero Trust strategy implementation. Examples of assets that are critical to identify include, but are not limited to, servers, workstations, network gear, telephony devices, printers, security devices, and low-powered devices.

More difficult to identify are devices that do not respond to requests for unique identity, such as low-powered devices. These devices may not have a supplicant or even conform to the standardized RFCs dictating the format, frequency, and protocol for responses. In these cases, unique asset attributes need to be used to identify the endpoint. Passive abilities are available to identify an endpoint and have been built into standards used to manufacture devices. The unique MAC address embedded into a device’s network interface card (NIC), for example, has the first 24 of its 48 bits reserved to identify the manufacturer of that endpoint against a known database of registered and reserved organizationally unique identifiers (OUI). The MAC address is a data element in standard configuration management databases.
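As an illustration of this passive identification, the sketch below extracts the first 24 bits (three octets) of a MAC address and looks them up in a table. The table `KNOWN_OUIS` and its single entry are made up for the example; a real deployment would load the registered OUI list instead.

```python
def oui_of(mac: str) -> str:
    """Return the first 24 bits (3 octets) of a 48-bit MAC as 'XX:XX:XX'."""
    # Drop separators such as ':', '-' or '.' and normalize to uppercase.
    digits = "".join(ch for ch in mac if ch.isalnum()).upper()
    if len(digits) != 12 or any(ch not in "0123456789ABCDEF" for ch in digits):
        raise ValueError(f"not a 48-bit MAC address: {mac!r}")
    return ":".join(digits[i:i + 2] for i in (0, 2, 4))


# Hypothetical excerpt of an OUI registry (placeholder data only).
KNOWN_OUIS = {"AA:BB:CC": "ExampleCorp (made up)"}


def manufacturer_of(mac: str) -> str:
    """Look up the manufacturer for a MAC, or 'unknown' if not registered."""
    return KNOWN_OUIS.get(oui_of(mac), "unknown")
```

For example, `manufacturer_of("aa-bb-cc-11-22-33")` resolves through the placeholder table, while an address whose OUI is not in the table is reported as `"unknown"`.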

Configuration Management Database (CMDB)

A configuration management database is an important repository of critical organization information that contains all types of devices, solutions, network equipment, data center equipment, applications, asset owners, application owners, emergency contacts, and the relationships between them all.

Whether the attribute used is the MAC address of an endpoint, a serial number unique to an aspect of the endpoint, or a unique attribute assigned to the endpoint or combination of its properties, a CMDB or an asset management database (AMDB) should exist to ensure that devices, services, applications, and data are tracked and provide critical information to respond to important events or incidents.

The information contained within the CMDB ensures that solutions may reference the data in the CMDB to control access to only authorized objects. Discrete onboarding processes are required to support a Zero Trust strategy. A description of exactly what needs to be known when an endpoint is put onto a network, along with roles, responsibilities, and updating requirements, is part of a mature organization’s Zero Trust profile.
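A minimal sketch of how a solution might reference CMDB data before allowing an endpoint onto the network follows. The record layout, the `REQUIRED_FIELDS` list, and the sample entries are all hypothetical; each organization defines its own onboarding requirements.

```python
# Fields the (hypothetical) onboarding process says must be known
# before an endpoint is put onto the network.
REQUIRED_FIELDS = ("mac", "asset_owner", "device_type", "emergency_contact")

# Stand-in for a CMDB, keyed by MAC address (placeholder data only).
CMDB = {
    "AA:BB:CC:00:00:01": {
        "mac": "AA:BB:CC:00:00:01",
        "asset_owner": "facilities",
        "device_type": "printer",
        "emergency_contact": "helpdesk",
    },
    "AA:BB:CC:00:00:02": {
        "mac": "AA:BB:CC:00:00:02",
        "device_type": "thermostat",
    },
}


def missing_fields(record):
    """List the required onboarding fields that are absent or empty."""
    return [name for name in REQUIRED_FIELDS if not record.get(name)]


def may_connect(mac):
    """Allow access only to endpoints with a complete CMDB record."""
    record = CMDB.get(mac.upper())
    return record is not None and not missing_fields(record)
```

In this sketch an unknown endpoint and an incompletely onboarded endpoint are treated the same way: neither is authorized until the record is complete.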

Using a consistent onboarding process keeps onboarding efficient and repeatable. It ensures that similar provisioning practices are followed across vendors and that configurations are applied in a consistent way to identify entities within the network. While variations may occur in devices, even from the same vendor, consistency in identifying the device in alignment with an onboarding process will lead to a notable improvement in security posture. Critical elements to review when differentiating devices or device types include

Firmware versions

Base software versions

Individual hardware component revisions

Organizationally unique identifier (OUI) variation for NICs
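The elements listed above can be captured in a single record so that two devices, even from the same vendor, can be compared attribute by attribute. This is only a sketch; the `DeviceRecord` type and its field names are hypothetical.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class DeviceRecord:
    """Attributes reviewed when differentiating devices (hypothetical names)."""
    firmware_version: str
    base_software_version: str
    hardware_revision: str
    nic_oui: str


def differing_attributes(a: DeviceRecord, b: DeviceRecord) -> list[str]:
    """Return the names of the attributes on which two records differ."""
    return [f.name for f in fields(a) if getattr(a, f.name) != getattr(b, f.name)]


# Two units of the same model that differ only in firmware (placeholder data).
unit_a = DeviceRecord("1.2.0", "4.0", "rev-B", "AA:BB:CC")
unit_b = DeviceRecord("1.3.1", "4.0", "rev-B", "AA:BB:CC")
```

Here `differing_attributes(unit_a, unit_b)` reports only the firmware field, which is the kind of variation an onboarding process would want to record.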

Internet Protocol (IP) Schemas

The Internet Protocol schema provides identification of services or objects via unique IP addresses. Necessary to any Zero Trust Segmentation program is having an IP address schema or plan to enable communications from workload to workload, within and outside of an ecosystem. Organizations should not focus specifically on the IP address to create a Zero Trust Segmentation strategy, but rather use an IP schema as another tool in an administrator’s toolbox to assist in identification of workloads and/or objects.

Another consideration is whether an organization should use provider-independent (PI) or provider-aggregated (PA) IP space to improve the security profile, while potentially adding an additional benefit of the organization easily moving from one provider to another.

Most organizations prefer to go with a provider-independent IP space. As stated in RFC 9099, “Operational Security Considerations for IPv6 Networks,” published in the Internet Engineering Task Force (IETF) stream:

a common question is whether companies should use Provider-Independent (PI) or Provider-Aggregated (PA) space [RFC7381], but, from a security perspective, there is minor difference. However, one aspect to keep in mind is who has administrative ownership of the address space and who is technically responsible if/when there is a need to enforce restrictions on routability of the space. This typically comes in the form of a need based on malicious criminal activity originating from it. Relying on PA address space may also increase the perceived need for address translation techniques, such as NPTv6; thereby, the complexity of the operations, including the security operations, is augmented.

Best practices to create a stable IP space environment include implementing an addressing plan and an IP address management (IPAM) solution. The following sections detail the three standards of IP addressing spaces that can be used to create or combine to create an IP Schema.

IPv4

Internet Protocol version 4 addresses, better known as IPv4 addresses, enable workloads to communicate over public mediums utilizing a standardized 32-bit address. It is well known that the world is running out of IPv4 addresses, and this has become a driver for organizations to move to IPv6.

IPv6

IPv6, with its standardized 128-bit address, is expected to be almost inexhaustible, with the ability to assign an address to every square inch of the earth’s surface. Implementing IPv6 is difficult, however, and should not be undertaken without a well-vetted plan. The effort is further complicated by the need to map out significantly more address space within IPv6, typically a 56- or 64-bit allocation to a given organization, and the flows between endpoints within that address space.
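The difference in scale between the two address families is simple arithmetic on the address width, and Python's standard `ipaddress` module can show how much space sits inside a single allocation. In this sketch the prefix `2001:db8:0:100::/56` is a hypothetical organizational allocation taken from the IPv6 documentation range, not a real assignment.

```python
import ipaddress

# Total address counts follow directly from the address width.
IPV4_TOTAL = 2 ** 32    # 32-bit addresses
IPV6_TOTAL = 2 ** 128   # 128-bit addresses

# Hypothetical allocation from the documentation range 2001:db8::/32,
# chosen so that it cannot collide with real address space.
ORG_PREFIX = ipaddress.ip_network("2001:db8:0:100::/56")


def subnet_count(prefix, new_prefix=64):
    """Number of /new_prefix subnets that fit inside the given prefix."""
    return 2 ** (new_prefix - prefix.prefixlen)


# The first /64 subnet inside the allocation.
first_subnet = next(ORG_PREFIX.subnets(new_prefix=64))
```

Under these assumptions a single /56 holds `subnet_count(ORG_PREFIX)` separate /64 subnets, each of which is itself far larger than the entire IPv4 address space, which is why an addressing plan matters so much more under IPv6.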

To begin, a directional plan to move to IPv6 has become a legal matter and requirement for some organizations in recent years. Workload communication over IPv6 is becoming necessary, especially when working with public sector agencies. Working on a Zero Trust migration and an IPv6 migration in the same program is a daunting task. A recommendation would be to develop a roadmap to making incremental improvements over time. As part of these incremental improvements, and especially as organizations start to roll out IPv6 greenfield, a mapping of communication for how endpoints interact with each other across their respective communication domains is highly recommended. While most engineers and administrators inherited the design or design standards for IPv4 networks, organizations have a unique opportunity related to IPv6 and its ability to be part of a security strategy.

Each workload that gets an IPv6 address and can communicate over IPv6 also has a unique identity that can be associated back to that address. With such a massive address space available within IPv6, identity can be tied back to the addressing, or at least associated with it as another tool in the network engineering toolbox.

Dual Stack

In many cases organizations need to use IPv6 address space in a “dual stack” implementation that includes both IPv4 and IPv6 addresses to enable a transition. When a transition must be managed as a dual stack, the process requires double the work for administration teams. Implementing dual stack requires that each workload gets an IPv4 and an IPv6 address and can communicate over IPv4 or IPv6. This dual stack process can create a high degree of administrative overhead, including mapping out addresses, designing recognizable subnets or network architectures, and managing network devices by applying the same identity and policies to two separate addresses. This dual phase of implementation tends to go on for several years, or it becomes a permanent method of managing the organization’s IP address issues.
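Applying "the same identity and policies to two separate addresses" can be sketched as a lookup that resolves either address family to one workload identity. The `Workload` type, the workload name, and the addresses (taken from the IPv4 and IPv6 documentation ranges) are hypothetical.

```python
import ipaddress
from dataclasses import dataclass


@dataclass(frozen=True)
class Workload:
    """One identity that owns both an IPv4 and an IPv6 address."""
    name: str
    ipv4: ipaddress.IPv4Address
    ipv6: ipaddress.IPv6Address


# Placeholder inventory using documentation address ranges.
WORKLOADS = [
    Workload(
        "hr-db",
        ipaddress.ip_address("192.0.2.10"),
        ipaddress.ip_address("2001:db8::10"),
    ),
]


def identity_for(address: str):
    """Resolve an IPv4 or IPv6 address to a workload name, or None."""
    addr = ipaddress.ip_address(address)
    for workload in WORKLOADS:
        if addr in (workload.ipv4, workload.ipv6):
            return workload.name
    return None
```

Because policy is then keyed on the resolved name rather than on either address, the dual-stack overhead is concentrated in keeping this mapping accurate.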

Vulnerability Management Pillar

The Vulnerability Management pillar refers to the Zero Trust capability to identify, manage, and mitigate risk within an organization. Effective implementation of vulnerability management requires well-defined Policy & Governance practices that are integrated into the solutions used to manage vulnerabilities. A Vulnerability Management organization needs to be established within the organization using best practices, such as the ones found in the Information Technology Infrastructure Library (ITIL) or those provided as part of the NIST Cybersecurity Framework. Many regulations, laws, and organizational policies rely on effective Vulnerability Management processes to classify known risks, to prioritize these risks for mitigation, to enable leadership to own these known risks, and for response to regulating bodies.

Endpoint Protection

An endpoint protection system not only provides the capability to detect threats such as malware but also provides the ability to determine file reputation, identify and flag known vulnerabilities, prevent the execution of exploits, and integrate behavioral analysis to understand both standard user and machine behavior to flag anomalies. It may also provide some level of machine learning, which can attempt to prevent zero-day malware or other endpoint attacks by monitoring for attributes that are common for malware, relying less on published intelligence data.

Endpoint protection should be able to monitor the system to detect malware and track the origination and propagation of threats throughout the network. Each individual endpoint protection agent has a small view of the environment in which it is connected. However, when data is aggregated between devices and combined with network-level monitoring, it is possible to provide a more complete picture of how a piece of malware enters, propagates, and impacts a network.

Endpoint protection should be able to provide a clear picture as a piece of malware enters and begins to spread through the network. Systems that can run endpoint protection will begin to detect and restrict the actions of the threat, while at the same time beginning to generate alarms. Systems will begin taking retrospective actions to understand where a malicious file originated. This in turn provides the ability to aggregate this data across all the protected endpoints and network monitoring systems, making it possible to illustrate the entry point and impacted systems until its detection.

In other considerations around the endpoint, the protection must extend beyond the endpoint itself. An example of this would be that it is rare to find any enterprise network that does not have Internet of Things (IoT) or operational technology (OT) endpoints. These endpoints may be part of a building management system, such as thermostats or lighting control features, or programmable logic controllers running conveyer systems in a warehouse. The commonality between IoT and OT is that both will be unable to utilize endpoint protection applications, and therefore administrators must rely more heavily on all the other controls available to provide protection. It may be difficult at first to understand how an endpoint protection application on a desktop could help protect a thermostat, but this capability comes down to the forensics being available in these systems.

Malware Prevention and Inspection

Malware is one of the most prevalent threats facing organizations. Due to this widespread usage of malware and its targeting of businesses for monetary gain, organizations cannot solely rely on malware prevention to occur at the endpoint. This is especially true when considering the number of IoT and OT endpoints that cannot run endpoint protection systems. Therefore, it is imperative that malware prevention be layered throughout the ecosystem, deployed on dedicated appliances, or in combination with other security tools. As discussed with endpoint protection, these network-level malware prevention and inspection capabilities must be able to integrate and work in concert with other systems to provide the greatest possible benefit. If the ecosystem can detect malware, it can then communicate this with connected endpoints to alert them of both the presence and type of malware to allow each endpoint to act against the threat. In addition, inspection systems can alert administrators to the threat and begin response efforts if the systems are unable to address the threats automatically.

An additional strength provided by malware prevention and inspection systems is the ability to have a central control point for scanning and blocking of malware. By placing a malware prevention and inspection system prior to a manufacturing network with OT endpoints, for instance, it allows for greater risk mitigation for those business-critical endpoints that are incapable of running their own malware prevention tool sets. As data moves in and out of these segments, malware can be quickly identified, and other connected systems and administrators can begin to take action to remove the threat to keep the organization running. Defense-in-depth means that malware prevention and inspection must occur as often as possible and be well integrated to the overall security ecosystem of an organization to achieve Zero Trust.

Vulnerability Management

Vulnerability management systems fulfill the role of identifying when exploits are possible on a system due to misconfigurations, software bugs, or hardware vulnerabilities. As technology advances in capability, software must become more complex to provide the features that can take advantage of these additional capabilities. At the same time, this software is being developed too quickly to maintain quality, leading to mistakes or oversights, known as bugs or vulnerabilities. From a security viewpoint, there are many instances where these bugs do not pose a problem, but as complexity and the pace of development increase, the quantity of bugs will increase as well, and it is inevitable that some of these bugs will be exploitable. Proactive discovery of these exploits and the ability to remediate before they can be leveraged by an attacker is of paramount importance to protecting an organization. The larger the organization, the greater the importance of a vulnerability management system to allow administrators to quickly ascertain the health of software deployed and identify these exploits as soon as they are made known.

The number of applications that are installed in an organization may not be always known. It is common for the count of applications to be well into the thousands, requiring operations staff to try to identify when each of these applications may be vulnerable to an exploit. Visibility, automation, and AI are required to support and scale vulnerability management teams due to the sheer number of objects within an organization. Vulnerability management systems provide the ability to scan the network and endpoints consistently and reliably against a database of known threats that is continually updated. These systems provide the automation and scale necessary to look across thousands of endpoints and their applications to understand what software is present, the vulnerabilities within that software, and to monitor the remediation efforts as patches or other upgrades are undertaken.

A vulnerability management system should also provide the ability for administrators to quickly understand and prioritize the vulnerabilities present. It is not enough to rate the threat from a vulnerability based on its impact alone; the rating should also factor in how often attackers are leveraging the exploit, the level of complexity to exploit, and the number and criticality of the systems that are vulnerable. Zero Trust strategies rely on context for decision-making, and vulnerability management is no different. If the particulars of an organization cannot be factored into the exploit analysis, administrators run the risk of spending precious time remediating exploits that would realistically pose minimal to no risk to the organization and delaying action against those threats to which they are truly vulnerable. Some of these lower-risk items may already be appropriately mitigated and should be tracked, along with other mitigated risks, as part of a residual risk database. Residual risk is a method to track any risk that remains after evaluation of security controls and mitigations is completed, because it is not possible to completely remove all risk in most scenarios.
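The contextual prioritization described above can be sketched as a score that combines impact with exploit prevalence, ease of exploitation, the number of affected systems, and their criticality. The equal weighting, the 0-to-1 scales, and the cap of 100 systems are illustrative assumptions only, not a standard scoring model.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    name: str
    impact: float              # 0..1, severity if exploited
    exploit_prevalence: float  # 0..1, how often attackers use the exploit
    exploit_ease: float        # 0..1, where 1 means trivial to exploit
    affected_systems: int      # count of vulnerable systems
    asset_criticality: float   # 0..1, importance of those systems
    mitigated: bool = False    # already covered by a compensating control


def score(f: Finding) -> float:
    """Equal-weight average of the contextual factors (an assumption)."""
    exposure = min(f.affected_systems, 100) / 100
    total = (f.impact + f.exploit_prevalence + f.exploit_ease
             + exposure + f.asset_criticality)
    return total / 5


def triage(findings):
    """Split findings into a ranked work queue and a residual risk list."""
    active = sorted((f for f in findings if not f.mitigated),
                    key=score, reverse=True)
    residual = [f for f in findings if f.mitigated]
    return active, residual
```

With this kind of scoring, a high-impact vulnerability that is rarely exploited and present on two low-criticality systems can rank below a moderate one that is widely exploited across critical systems, while mitigated items are tracked separately as residual risk.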

Authenticated Vulnerability Scanning

Authenticated vulnerability scanning, where a vulnerability scanner is provided valid credentials to authenticate its access to the target system, is a major component of a well-rounded vulnerability analysis program supporting a Zero Trust strategy. On its face, vulnerability scanning seems logical: scan the network and look for known vulnerabilities that could be exploited so that the organization has visibility into what should be fixed. Authenticated vulnerability scans, though, are a bit less obvious to some, prompting frequently posed questions such as “Why should I bypass security I already have in place?” or “Does it really matter if there is a vulnerability where I have security mitigations like multifactor authentication in front of my application?” It is important, though, to separate the concept of authenticated vulnerability scanning from penetration testing. For the latter, allowing access through authentication controls would defeat the purpose, but the goal of authenticated vulnerability scanning is to gain better visibility into an organization’s current level of risk. Authenticated vulnerability scanning is just another layer of a defense-in-depth strategy that allows a closer look at the vulnerabilities in an application that may otherwise be protected only by a username and password. Most security professionals would agree that relying only upon a username and password would be unwise, which highlights why authenticated vulnerability scanning must be a part of any Zero Trust strategy.

These authenticated scans remove the blind spot and provide insight into the true level of risk of an application or system. Once an attacker has made it onto a system, even if the account compromised has minimal privileges, other exploits may easily allow for additional actions to be taken utilizing the initial target as a jump point. Common exploits include privilege escalation or the ability to gain further visibility to other assets for pivot opportunities to spread deeper into the network, or to more critical systems. By implementing authenticated scans, these vulnerabilities can be more easily identified, and fixes or mitigations can be assessed to ensure that the risk to the organization is both understood and minimized or eliminated, if possible.

Systems such as multifactor authentication or passwordless authentications that rely upon hardware security keys can make the implementation of authenticated scans more difficult. It is important to thoroughly evaluate the scanning tools to be used to ensure that they are successfully navigating these hurdles and performing full authenticated scans against the potential targets. Some scanners may report a successful scan, dependent on configuration, even if part of the authentication fails or the entire scanning session does not maintain authentication. It is therefore imperative that the scanning platform is accurately assessed and that threat feeds are updated and regularly reviewed to ensure that configurations meet the vendor best practices and are providing the visibility expected by the organization. In certain cases, it may be appropriate to leverage multiple scanning platforms or related tool sets, such as endpoint protection systems, dependent on network and application architecture.

Unauthenticated vulnerability scanning essentially provides a “public” view of potential vulnerabilities that may exist on the scanned system. This view represents what a malicious attacker would have access to without user credentials. These scans typically discover fewer vulnerabilities because they don’t have access to user-level services.

Database Change

Acting as critical repositories of data regularly accessed by both employees and customers, databases are some of the most important knowledge repositories of an organization and may be commonly referred to as the “crown jewels” of the organization. The content of these databases can vary greatly, such as internal employee data for HR teams, product designs, customer data generated from an ERP system, company financials collected for accounting and executive teams, and system audit logs utilized by IT teams.

The scope and breadth of these databases mean that, for most organizations, they tend to be both very large in size and numerous in count. Their criticality to the smooth functioning of an organization, together with their size and scope, makes them enticing targets for an attacker, so organizations must ensure the integrity and confidentiality of the data stored. Data integrity and confidentiality are critical for ensuring that business decisions are made from sound data sources. Controlling risk and unauthorized access surrounding databases also helps protect the organization from fines applied by regulating bodies. Database change monitoring is therefore a critical component of Zero Trust to ensure that data is reliable and available when needed.

A Zero Trust strategy must incorporate robust monitoring of database systems to detect unexpected changes to any database, whether malicious or inadvertent. This monitoring identifies threats arising from a targeted attack as well as misconfigurations or other user errors that might introduce problems into the database or its operation. These monitoring systems must be able to quickly detect changes in behavior and help to take action so that any impact to the organization is minimized as much as possible. Monitoring database changes can also act as a check and balance for other security controls, for example by monitoring the source IP address of an administrator accessing the database and alerting if that connection attempt does not come from a jump box authorized for such a connection.
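The jump box check mentioned above can be sketched in a few lines. The set `AUTHORIZED_JUMP_BOXES`, the function name, and the alert format are hypothetical, and the address comes from a documentation range.

```python
import ipaddress

# Hypothetical list of jump boxes allowed to make administrative connections.
AUTHORIZED_JUMP_BOXES = {ipaddress.ip_address("192.0.2.25")}


def review_admin_connection(user: str, source_ip: str, is_admin: bool):
    """Return an alert string, or None when the connection is expected."""
    if not is_admin:
        return None  # this check only covers administrative sessions
    if ipaddress.ip_address(source_ip) in AUTHORIZED_JUMP_BOXES:
        return None
    return f"ALERT: admin {user} connected from unauthorized source {source_ip}"
```

In practice the returned alert would be routed to the organization's ticketing or alerting system rather than handled inline.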

The selected database change monitoring tool must be able to correlate across multiple databases regardless of their type or location, providing alerts based on the actual usage patterns of the organization to their data rather than the individual database itself. It must also provide an appropriate reporting mechanism that can direct alerts into the organization’s chosen ticketing system when human intervention is necessary to further analyze or respond to a detected event. Some systems may also provide other features such as data insights regarding volume and context of data within each database, which can assist with audit scoping. Other features may also include the ability to classify the data stored based on regulatory labels, policies, and vulnerability notifications for the database software itself. Database change solutions may integrate with privileged identity systems to control access end to end with controls applied to specific database fields.

Enforcement

Enforcement is the ability of an organization to implement Policy & Governance rules using solutions, methods, and attributes to restrict and control access to objects within the organization. The ability to enforce policy is a key result of Zero Trust. Building on the Security Capabilities of Zero Trust covered in this chapter, the Enforcement pillar builds controls over the concepts described in Policy & Governance, Vulnerability Management, Identity, and Analytics.

Cloud Access Security Broker (CASB)

A Cloud Access Security Broker typically sits between a specific network and a public cloud provider and promotes the use of an access gateway. These gateways provide information about how the cloud service might be used, and also govern access as an enforcement point. CASBs attempt to provide access control through familiar or traditional enterprise security approaches.

Further, CASBs are typically offered in an X-as-a-Service model at the front door to a cloud presence. This capability allows movements of workloads into a cloud-hosted model while helping to track and manage entity behavior. CASBs can also help to monitor what data flows in through the network-to-network interconnection (NNI). One example of this enforcement control is to allow only encrypted traffic into specific zones.

A CASB can also be useful in dealing with “shadow IT.” Due to the ease of setting up a tenant or subscription on a cloud provider, many business units may decide to bypass normal IT processes to obtain cloud-based services on their own, leaving IT with a massive blind spot. CASBs can help by monitoring traffic between an organization’s network and cloud service providers to bring these out-of-standard groups into focus and allowing for IT to remediate. This same visibility also allows for some reporting capabilities on the usage patterns of cloud systems by the organization.

Distributed Denial of Service (DDoS)

A denial of service (DoS) or distributed denial of service (DDoS) attack is a cyber attack that targets an organization by denying access to critical resources. Depending on its scope, this kind of attack may negatively impact customers, employees, businesses, or third parties. DoS attacks can originate from anywhere. The result of such an attack is that the targeted system cannot be used in the way in which it was intended.

For networks, intended use relies on a working control plane and a working data plane. The interruption of either could impede the system from working as expected or designed. Most systems that attempt to offer any sort of protection in this area are based on the ability to realize an attack via a signature, which defines the patterns observed in another organization. If the organization is the first to observe the attack “in the wild,” then the organization needs solutions to help redirect the traffic to minimize impact via a “sandbox” or other attack response process.

When multiple systems are networked together toward a target, this is known as distributed denial of service (DDoS). The primary difference between a DoS and DDoS is that the organization being targeted may be attacked from many locations at one time. Typically, DDoS attacks are more difficult to mitigate or remediate when compared to single-source DoS attacks.

Data Loss Prevention (DLP)

Data loss prevention is an enforcement point that controls and prevents the loss, misuse, or ability to access data or the intellectual property of an organization. Data is the “crown jewels” of the organization and must be protected using many capabilities and controls.

DLP programs control information creation, movement, storage, backup, and destruction. When the organization maintains inventories of data at rest, having visibility of where this data goes and where the data is allowed to go must be monitored. This data movement implies visibility over networks, static devices, mobile devices, and removable media. Also, DLP programs control what and how data will be retained or destroyed. Strategies for DLP should be developed and approved before technology solutions are employed to control the data.

Domain Name System Security Extensions (DNSSEC)

The Domain Name System (DNS) underpins how humans and machines locate one another on a network. DNS translates domain names to IP addresses so Internet resources can be used. DNSSEC is a set of protocol extensions to DNS that uses digital signatures to authenticate the origin and integrity of DNS responses, protecting systems from being directed to resources they should not access. DNSSEC can also be used to prevent attackers from manipulating or poisoning responses to DNS requests.

Email Security

Email security represents the ability of an organization to protect users from receiving malicious emails and to prevent attackers from gaining access to critical data stores or conducting attacks (for example, ransomware attacks). Email security typically complements data loss prevention capabilities by monitoring outbound email.

Email is a common threat vector that enables attackers to communicate to end users who may not have security threat awareness practices at the top of their minds. It is important to remove malicious emails using security solutions prior to an end user interacting with the email to reduce risk to the organization.

Firewall

A firewall is a network security device that monitors incoming and outgoing boundary network data traffic and decides whether to allow or block specific traffic based on a predefined set of security rules. The general purpose of a firewall is to establish a barrier between computer networks with distinct levels of trust. The most common use of a firewall is to protect a company's internal trusted networks from the untrusted Internet. Firewalls can be implemented in a hardware-, virtual-, or software-based form factor. The four types of firewalls are as follows:

Packet Filtering: Packet filtering firewalls are the most common type of firewalls. They will inspect a data packet’s source and destination IP addresses to see if they match predefined permitted security rules to determine if the packet should be able to enter the targeted network. Packet filtering firewalls can be further subdivided into two classes: stateless and stateful. Stateless firewalls inspect data packets without regard to what packets came before it; therefore, they do not evaluate packets based on context. Stateful firewalls remember information of previous packets and can then make operations more reliable and secure, with faster permit or deny decisions.

Next Generation: Next-generation firewalls (NGFWs) can combine traditional packet filtering with other advanced cybersecurity functions including encrypted packet inspection, antivirus signature identification, and intrusion prevention. These additional security functions are accomplished primarily through what is referred to as deep packet inspection (DPI). DPI allows a firewall to look deeper into a packet beyond source and destination information. The firewall can inspect the actual payload data within the packets, and packets can be further categorized and stopped if malicious data is identified.

Network Address Translation: Network Address Translation (NAT) firewalls map a packet’s IP address to another IP address by changing the packet header while in transit via the firewall. Firewalls can then allow multiple devices with distinct IP addresses to connect to the Internet utilizing a single IP address. The advantage of using NAT is that it allows a company’s internal IP addresses to be obscured to the outside world. While a firewall can be dedicated to the purpose of NAT, this function is typically included in most other types of firewalls.

Stateful Multilayer Inspection: Stateful multilayer inspection (SMLI) firewalls utilize deep packet inspection (DPI) to examine all seven layers of the Open Systems Interconnection (OSI) model. This functionality allows an SMLI firewall to compare a given packet to known states of trusted packets and their trusted sources.
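The difference between the stateless and stateful packet filtering classes described above can be illustrated with a small sketch. The rule set, addresses, and class names are hypothetical, and the model is deliberately simplified: a real firewall also tracks protocol, connection state, and timeouts.

```python
from typing import NamedTuple


class Packet(NamedTuple):
    src: str
    sport: int
    dst: str
    dport: int


# Hypothetical rule: one inside host may reach one outside server on port 443.
RULES = {("10.0.0.5", "203.0.113.7", 443)}


def stateless_permit(p: Packet) -> bool:
    """Evaluate each packet on its own, with no memory of earlier packets."""
    return (p.src, p.dst, p.dport) in RULES


class StatefulFilter:
    """Remember permitted flows so that their return traffic is recognized."""

    def __init__(self):
        self.flows = set()

    def permit(self, p: Packet) -> bool:
        if stateless_permit(p):
            self.flows.add(p)  # record the flow for later reply packets
            return True
        # A reply is the recorded packet with source and destination swapped.
        reverse = Packet(p.dst, p.dport, p.src, p.sport)
        return reverse in self.flows
```

In this sketch the stateless filter rejects the server's reply unless a separate inbound rule is written, while the stateful filter permits the reply only after it has seen the matching outbound packet.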

Intrusion Prevention System (IPS)

An intrusion prevention system is a hardware- or software-based security system that can continuously monitor a network for malicious or unauthorized activity. If such an activity is identified, the system can take automated actions, which can include reporting to administrators, dropping the associated packets, blocking traffic from the source, or resetting the transmission connection. An IPS is considered more advanced than an intrusion detection system (IDS), which can also monitor but can only alert administrators.

An IPS is utilized by placing the system in-line for the purpose of enabling inspection of data packets in real time as they traverse between sources and destinations across a network. An IPS can inspect traffic based on one of three methods:

Signature-based: The signature-based inspection method focuses on matching data traffic activity to well-known threats (signatures). This method works well against known threats but is not able to identify new threats.

Anomaly-based: Anomaly-based inspection searches for abnormal traffic behavior by comparing network activity against approved baseline behavior. This method typically works well against advanced threats (sometimes referred to as zero-day threats).

Policy-based: Policy-based inspection monitors traffic against predefined security policies. Violations of these policies result in blocked connections. This method requires detailed administrator setup to define and configure the required security policies.
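The three inspection methods above can be sketched side by side. This is a simplified illustration under stated assumptions: the signatures, the payload-size threshold standing in for a learned baseline, and the blocked port are all hypothetical values, and a production IPS would use far richer models for each method.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Packet:
    src: str
    dst_port: int
    payload: bytes


# Hypothetical values for illustration only.
SIGNATURES = [b"cmd.exe /c", b"' OR 1=1"]   # known-bad byte patterns
BASELINE_MAX_PAYLOAD = 1500                 # stand-in for a learned baseline
BLOCKED_PORTS = {23}                        # administrator-defined policy


def inspect(packet: Packet) -> List[str]:
    """Return the names of the inspection methods that flag this packet."""
    hits = []
    if any(sig in packet.payload for sig in SIGNATURES):
        hits.append("signature")
    if len(packet.payload) > BASELINE_MAX_PAYLOAD:
        hits.append("anomaly")
    if packet.dst_port in BLOCKED_PORTS:
        hits.append("policy")
    return hits


def verdict(packet: Packet) -> str:
    """Drop the packet if any method flags it; otherwise forward it."""
    return "drop" if inspect(packet) else "forward"
```

Running the checks in one pass reflects the layered use of methods: a packet that matches no signature can still be stopped by the anomaly or policy check.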

These IPS inspection methods are then used, singly or in layered combination, on one of the following platforms:

Network Intrusion Prevention System (NIPS): A NIPS is used in the previously mentioned in-line real-time method and is installed strategically to monitor traffic for threats.

Host Intrusion Prevention Systems (HIPS): A HIPS is installed on an object, which can typically include endpoints and workloads. Inspection of inbound and outbound traffic is limited to this single object.

Network Behavior Analysis (NBA): An NBA system is also installed strategically on a network and inspects data traffic to identify anomalous traffic (such as DDoS attacks).

Wireless Intrusion Prevention System (WIPS): A WIPS primarily functions the same as a NIPS except that it is specialized to work on Wi-Fi networks. The WIPS can also identify malicious activities directed exclusively at Wi-Fi networks.

IPS security technology is an important part of a Zero Trust Architecture. It is through IPS capabilities and by automating quick threat response tactics that most serious security attacks are prevented. While an IPS can be a dedicated network security system, these IPS functions can also be incorporated in firewalls such as the NGFW and SMLI systems.

Proxy

A proxy acts as an obfuscation and control intermediary between end users and objects to protect organizational data from misuse, attack, or loss.

Proxies are deployed in several circumstances, but for most organizations, there are two primary use cases. One is a proxy to the Internet, where the proxy is placed in-line between the corporate user community and the Internet. These proxy services are often combined with other control capabilities to provide secure web gateway, email security, DLP, and other controls on outbound Internet traffic. This set of controls can be located on-premises or can be cloud-based. Policy enforcement controls can then be employed on all outbound Internet traffic. Policy enforcement through a proxy can then affect which sites and services can be accessed, whether files can be transferred, what user identity attribution can be gleaned, or which network path is taken, to name a few.
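As a rough sketch of the outbound policy enforcement just described, the function below decides whether a request should pass through an Internet proxy. The blocked host, the group allowed to upload, and the function name are hypothetical. Treating POST and PUT as file transfers is a simplifying assumption.

```python
from urllib.parse import urlsplit

# Hypothetical policy data for illustration only.
BLOCKED_HOSTS = {"malware.example"}
UPLOAD_ALLOWED_GROUPS = {"engineering"}
UPLOAD_METHODS = {"POST", "PUT"}


def proxy_decision(user_group: str, method: str, url: str) -> str:
    """Return an allow/deny decision for one outbound request."""
    host = urlsplit(url).hostname or ""
    if host in BLOCKED_HOSTS:
        return "deny: blocked site"
    if method.upper() in UPLOAD_METHODS and user_group not in UPLOAD_ALLOWED_GROUPS:
        return "deny: file transfer not permitted"
    return "allow"
```

The decision takes the user group as an input because the proxy is also a point where identity attribution is available, so the same request can be allowed for one group and denied for another.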

The second common use case is a reverse proxy, where control is placed in front of offered services (that is, intranet and/or Internet) where the proxy acts as an intermediary between application front-end services and the user community. Reverse proxy services often supply load balancing, encryption off-loading from application front ends, performance-related caching, and AAA of sessions and users.

With the current evolution of general network architectures, where users and services can be located anywhere, the function and location of a proxy have an important role in a Zero Trust Architecture. Corporate users cross a boundary to communicate with Internet-based cloud and SaaS services on a routine basis. Internet-based users cross a boundary to access private cloud and corporate data center services. These boundaries are not only key policy enforcement points, but they are also opportunities to derive attribution from endpoints, users, and workloads. This attribution can be used to determine the current posture of the objects involved in the connection request.

Virtual Private Network (VPN)

A virtual private network is a method to create an encrypted connection between trusted objects across the Internet or untrusted networks and is an important method to be leveraged in Zero Trust Architecture designs. VPNs take many forms, from carrier-provided Multiprotocol Label Switching (MPLS) services to individual user-focused remote access (RA) VPNs.

If we look at this solution from a security controls perspective, VPNs can provide general traffic isolation and routing controls, which reduce the attack surface through broad control over where network packets can be forwarded. Remote access VPNs may also help organizations categorize use cases and policy definitions that may exist to identify users, endpoints, and functional groups.

If an organization were to make a full accounting of its various VPN deployments, it would document organizational constructs such as how MPLS VPN and Virtual Routing and Forwarding (VRF) may be deployed to isolate traffic across business units, divisions, or subsidiaries. It also would account for vendor, partner, and customer access mechanisms along with service and application access requirements.

Security Orchestration, Automation, and Response (SOAR)

Security orchestration, automation, and response, or SOAR, is a set of solutions that enables an organization to visualize, monitor, and respond to security events. A SOAR is not a single tool, product, or function. The intention of a SOAR is to automate routine, repeatable, and time-consuming security-related tasks. The SOAR ties disparate systems together to provide a more complete picture of security events across multiple security platforms. A SOAR is used to improve an organization’s ability to identify and react to security events.

From a Zero Trust perspective, these capabilities can also be used to enable, update, and monitor Zero Trust policies across the entire security ecosystem. For example, orchestration capabilities utilized to tie vulnerability management systems with network access controls could allow for policy adjustments to be made based on discovered endpoint vulnerabilities where connecting devices with known vulnerabilities are no longer allowed to connect to the network until remediation occurs. Also, automation could be used to provide unattended remediation services to devices that have been flagged as untrustworthy.
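The vulnerability-driven policy adjustment in the example above can be sketched as a small orchestration rule. This is an illustration only: the `Finding` record, the severity scale of 0 to 10, and the quarantine threshold are assumptions, and no real scanner or network access control API is being modeled.

```python
from dataclasses import dataclass
from typing import Dict, Iterable


@dataclass
class Finding:
    """One result from a hypothetical vulnerability management feed."""
    device_id: str
    vuln_id: str
    severity: float          # assumed 0-10 scale
    remediated: bool = False


QUARANTINE_THRESHOLD = 7.0   # hypothetical risk tolerance


def access_decisions(findings: Iterable[Finding]) -> Dict[str, str]:
    """Translate findings into per-device network access decisions."""
    decisions: Dict[str, str] = {}
    for f in findings:
        decisions.setdefault(f.device_id, "allow")
        if not f.remediated and f.severity >= QUARANTINE_THRESHOLD:
            # Any open high-severity finding blocks the device.
            decisions[f.device_id] = "quarantine"
    return decisions
```

Once a finding is marked remediated, rerunning the rule returns the device to `allow`, which mirrors the "until remediation occurs" condition in the text.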

File Integrity Monitor (FIM)

As an enforcement control applied to a Zero Trust Architecture, a file integrity monitor provides the ability to detect potentially nefarious changes made to the files or file systems supporting services and applications. FIM capabilities are typically applied to server platforms but can be deployed across any platform with an accessible file system. File change detection and alerting could be used in a Zero Trust Architecture to affect the trust status of a system that has experienced recent changes. Zero Trust policy may direct sessions to or from systems where unexpected file changes have occurred to be limited or blocked completely.

To realize Zero Trust capabilities from this control, organizations must expend effort in setting baselines for known and expected behaviors. Administrators will then need to define which categorizations of file changes will trigger actions to isolate systems where change has been detected. Change detection policy and change detection alerting must then be translated into response plans and actions. This activity could be arduous and time-consuming but will result in less effort expended chasing false positives. Tying the FIM capabilities into a SOAR architecture can then result in automated isolation and remediation for impacted workloads.
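The baseline-and-compare idea behind file integrity monitoring can be sketched with cryptographic hashes. This is a minimal illustration, assuming SHA-256 digests of file contents are an adequate fingerprint. The function names are hypothetical, and commercial FIM products track much more, such as permissions and ownership.

```python
import hashlib
from pathlib import Path
from typing import Dict, List


def hash_file(path: Path) -> str:
    """Return the SHA-256 hex digest of a file, read in chunks."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()


def snapshot(root: Path) -> Dict[str, str]:
    """Map each file under root (by relative path) to its digest."""
    return {
        str(p.relative_to(root)): hash_file(p)
        for p in sorted(root.rglob("*"))
        if p.is_file()
    }


def detect_changes(baseline: Dict[str, str],
                   current: Dict[str, str]) -> Dict[str, List[str]]:
    """Classify differences between a baseline and a later snapshot."""
    shared = baseline.keys() & current.keys()
    return {
        "added": sorted(current.keys() - baseline.keys()),
        "removed": sorted(baseline.keys() - current.keys()),
        "modified": sorted(k for k in shared if baseline[k] != current[k]),
    }
```

A baseline would be taken with `snapshot` while the system is in a known-good state. Later snapshots are compared with `detect_changes`, and the resulting categories are what administrators would map to response actions.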

Segmentation

Segmentation is the art of identifying and classifying sets of services, applications, endpoints, users, or functional classifications and isolating them from other sets of systems. This isolation is typically accomplished through various techniques that focus on network traffic controls. These sets of controls will vary depending on where they are applied and the classification of the assets being segmented. For example, isolating a corporate intranet from the Internet will require significantly more capabilities due to the scope and scale of business services that need to traverse this boundary. In contrast, isolating building management systems attached to the corporate network from general-purpose corporate workstations would be a “deny any” rule, assuming one can clearly identify building management systems and corporate workstations. The foundational process for identification and classification of corporate assets is essential to creating a Zero Trust Architecture, where defining segments or enclaves is used to establish trusts to other enclaves and sets of controls employed to protect sets of assets within an enclave.
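The building management example above can be sketched as a default-deny lookup between enclaves. The asset names, enclave labels, and allowed flow are hypothetical. The sketch assumes every asset has already been identified and classified, which the text notes is the hard prerequisite.

```python
from typing import Dict, Set, Tuple

# Hypothetical asset classification for illustration only.
ENCLAVE_OF: Dict[str, str] = {
    "hvac-controller-01": "building-mgmt",
    "bms-server": "building-mgmt",
    "laptop-117": "workstations",
}

# Only flows listed here are permitted; everything else is denied.
ALLOWED_FLOWS: Set[Tuple[str, str]] = {
    ("building-mgmt", "building-mgmt"),
}


def is_allowed(src: str, dst: str) -> bool:
    """Permit traffic only between classified assets with an explicit rule."""
    src_enclave = ENCLAVE_OF.get(src)
    dst_enclave = ENCLAVE_OF.get(dst)
    if src_enclave is None or dst_enclave is None:
        return False  # unclassified assets are never trusted
    return (src_enclave, dst_enclave) in ALLOWED_FLOWS
```

Because the rule set is an allow list, the "deny any" behavior between workstations and building management systems requires no explicit entry. It is simply the absence of a permitted flow.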

Analytics Pillar

The Analytics group of Zero Trust Capabilities is an extremely important aspect of the Zero Trust deployment process. The need for analytics, like the ongoing need to gain more insight into anything identity based, is constant and ever evolving. Analytics must sort through a massive amount of data, sometimes likened to “noise,” to find the data that indicates what is happening within the ecosystem.

Analytics comes in many forms. These forms range from analytics associated with changes made to the network that may attempt to circumvent the Zero Trust implementation to tracking users and their actions throughout their time on the network, whether connected locally or remotely. Analytics about which threats are found within the network, how to better detect them, and how they were blocked will all come into play and will help overcome any reluctance that management, business units, operational staff, or administration staff have when it comes to the implementation.

Application Performance Monitoring (APM)

Application performance monitoring is the process of establishing data points on the performance of an application by observing the behavior from user interactions as well as via synthetic testing. These data points can be used to establish a baseline that can then be used to understand when the application is deviating from that baseline and requires investigation.
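Baseline deviation of this kind can be sketched with simple statistics. This is a minimal illustration, assuming response times are the collected data point and that a distance of three standard deviations from the mean counts as a deviation. Both the function names and the threshold are hypothetical choices, not a recommendation.

```python
from statistics import mean, stdev
from typing import Sequence, Tuple


def latency_baseline(samples_ms: Sequence[float]) -> Tuple[float, float]:
    """Summarize historical response times as (mean, standard deviation)."""
    return mean(samples_ms), stdev(samples_ms)


def deviates(value_ms: float, baseline: Tuple[float, float],
             threshold: float = 3.0) -> bool:
    """Flag a measurement that falls too far from the baseline mean."""
    mu, sigma = baseline
    if sigma == 0:
        return value_ms != mu
    return abs(value_ms - mu) / sigma > threshold
```

The same comparison works whether the measurements come from real user interactions or from synthetic tests, provided the baseline was built from the same kind of sample.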

The data points collected can include CPU usage, error rates, response times or latency, how many instances of an application are running, request rates, user experience, and more. This data can also be utilized to ensure that an application is meeting a specified level of performance or availability as part of a service-level agreement (SLA). A well-rounded APM should be able to monitor not only down to the application code level but also across the infrastructure supporting the application to ensure a complete picture of the health and performance of an application. This means the APM solution setup process will need to include stakeholder decision-making on how to implement monitoring and tuning of the solution for optimal effect in each unique environment.

APM is a necessity for Zero Trust Architectures because users may access an application from various locations using disparate devices that may or may not be managed by the organization. When a user experiences a problem with an application, it is imperative that the operations and engineering teams can quickly understand whether the issue is related to the application itself or whether there are factors beyond the organization’s control. This data is important to ensure that an unhealthy application is restored to a healthy state or, if outside factors are causing the issue, that the users are informed so they can adjust as necessary to improve their experience. As mentioned, APM can also provide a way to track application performance against a service-level agreement, so Software-as-a-Service offerings can be monitored to ensure that the organization is receiving the level of service it has agreed to with the vendor.

Finally, APM provides the ability to utilize synthetic tests, which are tests that the APM runs to simulate normal user behavior but in a repeatable fashion. These tests can be useful in periods of low user utilization or after a change to an application or its supporting systems to function as a check and balance. The output of these tests may help an organization quickly ascertain whether the changes made have had a meaningful negative impact on an application and allow for quicker resolution. Because synthetic tests isolate as many variables as possible and are therefore repeatable, running them at regular intervals may also highlight minor deviations that, if left unchecked, can turn into user-impacting issues. This enables the organization to proactively address the issue and keep the application in a healthy state, improving both user satisfaction and organizational efficiency.

Auditing, Logging, and Monitoring

Auditing, logging, and monitoring form an ongoing process that takes in the identity and vulnerability assessment of an endpoint and attempts to align this assessment with what the user or device is doing throughout its life cycle on the network. The challenge of logging and monitoring is the sheer number of devices and users that access the network on a regular basis, and the need to crunch vast amounts of data to validate and archive what users and devices are doing. In addition to the need for users to administer network devices through command issuance, upgrades, periodic reboots, and similar actions, the organization must also track the behaviors of users and devices as they connect through the network access devices, along with the potential responses that are sent back to the actions taken by these devices.

The phrase “signal within the noise” has been used throughout this book without much detail on what signal should be looked for and sorted through. After the identity of a user or device has been determined, the identity’s expected behavior is mapped out, actions are taken to determine the potential vulnerabilities that exist within that identity, and enforcement is applied to prevent that identity from communicating with resources that it is not meant to access. What could arguably be considered the most labor-intensive ongoing aspect of the equation is now required: the need to monitor the behavior of that user or device while validating that this behavior is expected and aligns with security policy.
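One way to picture finding the signal is to compare logged events against each identity's mapped-out expected behavior and keep only what falls outside it. The sketch below is an illustration under stated assumptions: the `Event` record, the identity names, and the expected-behavior table are hypothetical, and real log data is far less tidy.

```python
from dataclasses import dataclass
from typing import Dict, Iterable, List, Set, Tuple


@dataclass(frozen=True)
class Event:
    identity: str
    action: str
    resource: str


# Hypothetical expected behavior per identity: allowed (action, resource) pairs.
EXPECTED: Dict[str, Set[Tuple[str, str]]] = {
    "badge-printer-02": {("connect", "print-server")},
    "alice": {("login", "vpn"), ("read", "wiki")},
}


def unexpected_events(events: Iterable[Event]) -> List[Event]:
    """Return events that do not match the identity's expected behavior."""
    return [
        e for e in events
        if (e.action, e.resource) not in EXPECTED.get(e.identity, set())
    ]
```

Identities with no entry in the table have every event flagged, which matches the premise that behavior must be validated as expected rather than assumed to be acceptable.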

Change Detection