Workload Optimizer is an analytical decision engine that generates actions that drive the IT environment toward a desired state where workload performance is assured and cost is minimized. In this sample chapter from Cisco Intersight: A Handbook for Intelligent Cloud Operations, you will explore how Workload Optimizer helps applications perform well while simultaneously minimizing cost (in a public cloud) and optimizing resource utilization (on premises) while also complying with workload policies.

Introduction

IT operations teams essentially have a prime directive against which their success is constantly measured: to deliver performant applications at the lowest possible cost while maintaining compliance with IT policies.

This goal is thwarted by the almost intractable complexity of modern application architectures—whether virtualized, containerized, monolithic or microservices based, on premises or public cloud, or a combination of them all—as well as the sheer scale of the workloads under management and the constraints imposed by licensing and placement rules. Having a handle on which components of which applications depend on which pieces of the infrastructure is challenging enough; knowing where a given workload is supposed to—or allowed to—run is more difficult still; knowing what specific decisions to make at any given time across the multicloud environment to achieve the prime directive is a Herculean task, beyond the scale of humans. As a result, this prime directive is oftentimes met with a brick wall.

Workload Optimizer—a separately licensed feature set within the Intersight platform—aims to solve this challenge through application resource management, ensuring that applications get the resources they need when they need them. Workload Optimizer helps applications perform well while simultaneously minimizing cost (in a public cloud) and optimizing resource utilization (on premises) while also complying with workload policies.

Traditional Shortfalls of IT Resource Management

The traditional method of IT resource management has fallen short in the modern data center. This process-based approach typically involves several steps:

Step 1. Setting static thresholds for various infrastructure metrics, such as CPU or memory utilization

Step 2. Generating an alert when these thresholds are crossed

Step 3. Relying on a human being viewing the alert to:

Determine whether the alert is anything to worry about. (What percentage of alerts on any given day are simply discarded in most IT shops? 70%? 80%? 90%?)

If the alert is worrisome, determine what action to take to push the metric back below the static threshold.

Step 4. Executing the necessary action and then lather, rinse, repeat.

This approach has significant fundamental flaws.

First, most such metrics are merely proxies for workload performance; they don’t measure the health of the workload itself. High CPU utilization on a server may be a positive sign that the infrastructure is well utilized and does not necessarily mean that an application is performing poorly. Even if the thresholds aren’t static but are centered on an observed baseline, there’s no telling whether deviating from the baseline is good or bad or simply a deviation from normal.

Second, most thresholds are set low enough to provide human beings time to react to an alert (after having frequently ignored the first or second notifications), meaning expensive resources are not used efficiently.

Third, and maybe most importantly, this approach relies on human beings to decide what to do with any given alert. An IT administrator must somehow divine from all current alerts not just which ones are actionable but which specific actions to take. These actions are invariably intertwined with and will affect other application components and pieces of infrastructure in ways that are difficult to predict. For example:

A high CPU alert on a given host might be addressed by moving a virtual machine (VM) to another host—but which VM?

Which other hosts?

Does that other host have enough memory and network capacity for the intended move?

Will moving that VM create more problems than it solves?

Multiply this analysis by every potential metric and every application workload in the environment, and the problem becomes exponentially more difficult.

Finally, usually the standard operating procedure is to clear an alert, but, as noted previously, any given alert is not a true indicator of application performance. As every IT administrator has seen time and again, healthy apps can generate red alerts, and “all green” infrastructures can still have poorly performing workloads. A different paradigm is needed, and Workload Optimizer provides such a paradigm.

Paradigm Shift

Workload Optimizer is an analytical decision engine that generates actions (recommendations that are optionally automatable in most cases) that drive the IT environment toward a desired state where workload performance is assured and cost is minimized. It uses economic principles (the fundamental laws of supply and demand) in a market-based abstraction to allow infrastructure entities (for example, hosts, VMs, containers, storage arrays) to shop for commodities such as CPU, memory, storage, or network resources.

This market analysis leads to actions. For example, a physical host that is maxed out on memory (high demand) would sell its memory at a high price to discourage new tenants, whereas a storage array with excess capacity would sell its space at a low price to encourage new workloads. While all this buying and selling takes place behind the scenes within the algorithmic model and does not correspond directly to any real-world dollar values, the economic principles are derived from the behaviors of real-world markets. These market cycles occur constantly, in real time, to ensure that actions are currently and always driving the environment toward the desired state. In this paradigm, workload performance and resource optimization are not an either/or proposition; in fact, they must be considered together to make the best decisions possible.
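To make the pricing idea concrete, here is a toy model. It is not Workload Optimizer's actual algorithm: the price curve `1 / (1 - u)^2`, the host names, and the function names are all assumptions, chosen only to show how a price that climbs with utilization steers a consumer toward the less congested provider.

```python
from dataclasses import dataclass


@dataclass
class Host:
    name: str
    mem_capacity_gb: float
    mem_used_gb: float


def memory_price(host: Host, extra_gb: float = 0.0) -> float:
    """Illustrative price that grows without bound as utilization nears 100%."""
    utilization = (host.mem_used_gb + extra_gb) / host.mem_capacity_gb
    if utilization >= 1.0:
        return float("inf")  # no room left, so the host effectively refuses to sell
    return 1.0 / (1.0 - utilization) ** 2


def cheapest_host(hosts: list[Host], vm_mem_gb: float) -> Host:
    """The VM 'shops' by picking the host quoting the lowest price after placement."""
    return min(hosts, key=lambda h: memory_price(h, vm_mem_gb))


hosts = [
    Host("host-a", mem_capacity_gb=256, mem_used_gb=240),  # nearly maxed out
    Host("host-b", mem_capacity_gb=256, mem_used_gb=64),   # plenty of headroom
]
choice = cheapest_host(hosts, vm_mem_gb=16)
print(choice.name)
```

In this sketch the nearly full host quotes a far higher price than the lightly loaded one, so a new 16 GB workload lands on the host with headroom. No static threshold is involved.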

Workload Optimizer can be configured to either recommend or automate infrastructure actions related to placement, resizing, or scaling for either on-premises or cloud resources.

Users and Roles

Workload Optimizer leverages Intersight’s core user, privilege, and role-based access control functionality (described in Chapter 1, “Intersight Foundations”). Intersight administrators can assign various predefined privileges specific to Workload Optimizer (see Table 9-1) to a given Intersight role to allow for a division of privileges within an organization. By default, an Intersight administrator has full Administrator privileges in Workload Optimizer, and an Intersight read-only user is granted Observer privileges. Other Workload Optimizer privileges (for example, WO Advisor, WO Automator) must be explicitly assigned to a role via Settings > Roles.

Table 9-1 Privileges and Permissions

| Workload Optimizer Privileges | Permissions |
|---|---|
| Workload Optimizer Observer | Can view the state of the environment and recommended actions. Cannot run plans or execute any recommended actions. |
| Workload Optimizer Advisor | Can view all Workload Optimizer charts and data and run plans. Cannot reserve workloads or execute any recommended actions. |
| Workload Optimizer Automator | Can execute recommended actions and deploy workloads. Cannot perform administrative tasks. |
| Workload Optimizer Deployer | Can view all Workload Optimizer charts and data, deploy workloads, and create policies and templates. Cannot run plans or execute any recommended actions. |
| Workload Optimizer Administrator | Can access all Workload Optimizer features and perform administrative tasks to configure Workload Optimizer. |

Targets and Configuration

For Workload Optimizer to generate actions, it needs information to analyze. It accesses the information it needs via API calls to targets, as configured under the Admin tab (refer to Chapter 1). The information gathered from infrastructure targets—metadata, telemetry, and metrics—must be both current and actionable.

The number of possible data points available for analysis is effectively infinite, and Workload Optimizer gathers only data that has the potential to lead to or impact a decision. This distinction is important as it can help explain why a given target is or is not available or supported. In theory, anything with an API could be integrated as a target, but the key question would be “What decision would Workload Optimizer make differently if it had this information?”

One of the great advantages of this approach—and the economic abstraction that underpins the decision engine—is that it scales. Human beings are easily overwhelmed by data, and more data usually just means more noise that confuses the situation. In the case of Workload Optimizer’s intelligent decision engine, the more data it has from a myriad of heterogeneous sources, the smarter it gets. More data in this case means more signal and better decisions.

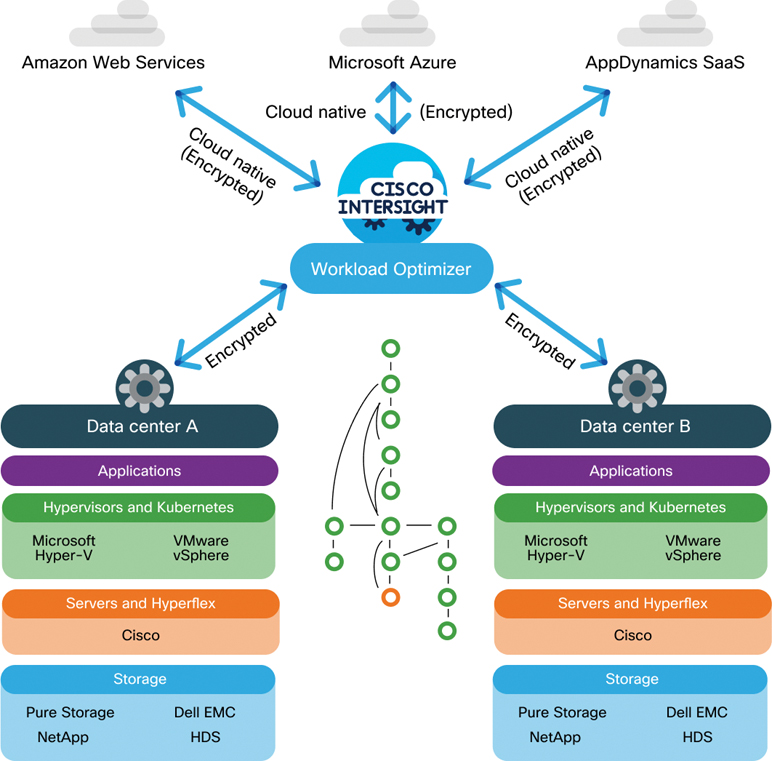

Workload Optimizer accesses its targets in three basic ways (see Figure 9-1):

Making direct API calls from the Intersight cloud to other cloud services and platforms such as Amazon Web Services, Microsoft Azure, and AppDynamics SaaS (that is, directly cloud to cloud)

Communicating directly with targets that natively run Device Connector

Using the Assist function of Intersight Appliance, which enables Workload Optimizer to communicate with on-premises infrastructure natively lacking Device Connector (that is, most third-party hardware and software) that otherwise would be inaccessible behind an organization’s firewall

Figure 9-1 Communication with public cloud services and on-premises resources

It is therefore possible to use Workload Optimizer as a purely SaaS customer, as a purely on-premises customer, or as a mix of both.

While all communication to targets occurs via API calls, without any traditional agents required on the target side, Kubernetes clusters do require a unique setup step: deploying Kubernetes Collector on a node within the target cluster. Collector runs with a service account that has a cluster administrator role and runs Device Connector, essentially proxying communications and commands between Intersight and the native cluster kubelet or node agent. In this respect, Collector allows the insertion of Device Connector into any Kubernetes cluster, whether on premises or in the public cloud.

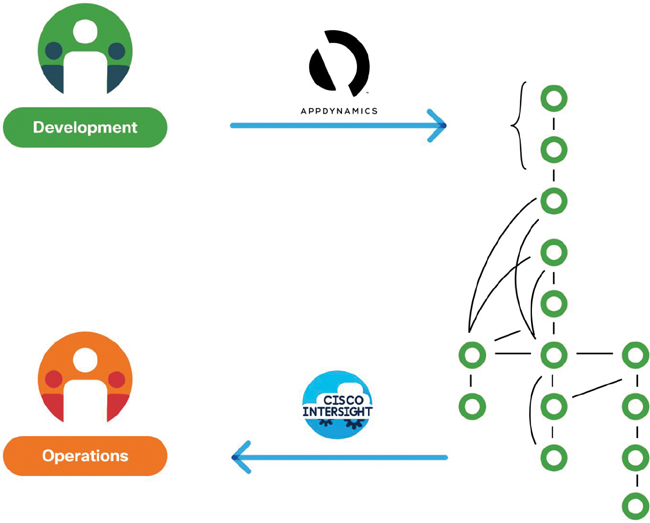

One of the richest sources of workload telemetry for Workload Optimizer comes from application performance management tools such as Cisco’s AppDynamics. As noted earlier, the core focus of Workload Optimizer is application resource management. However, for an application to truly perform well, it needs more than just the right physical resources at the right time; it also needs to be written and architected well.

AppDynamics provides developers and applications IT teams with a detailed logical dependency map of the application and its underlying services, fine-grained insight into individual lines of problematic code and poorly performing database queries and their impact on actual business transactions, and guidance in troubleshooting poor end-user experiences. Figure 9-2 illustrates the combination of application performance management (that is, assuring good code and architecture) and Workload Optimizer’s application resource management (that is, the right resources at the right time for the lowest cost).

Figure 9-2 AppDynamics integration into Workload Optimizer

Cisco Full Stack Observability (FSO) expands on the visibility, insights, and action capabilities of the Workload Optimizer and AppDynamics combination and enhances it with wide area network and end-user monitoring intelligence from Cisco ThousandEyes. The FSO solution currently addresses numerous critical business use cases, such as customer digital experience monitoring and cloud-native application monitoring, and more product integrations and use cases are on the way.

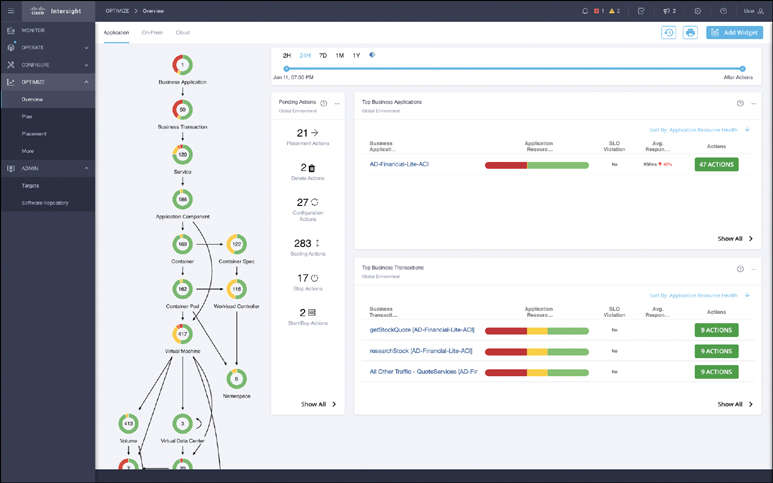

The Supply Chain

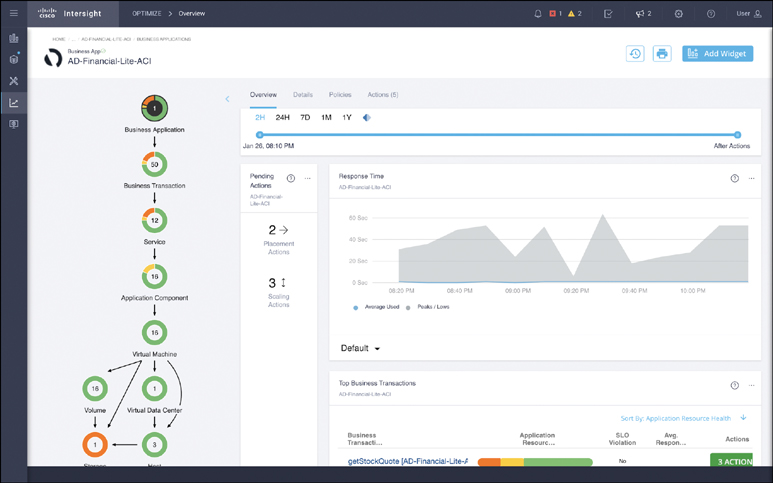

Workload Optimizer uses the information it gathers from targets to stitch together a logical dependency mapping of all entities in the customer environment, from the business application at the top to the containerized and/or virtualized workloads in the middle to physical hosts, storage, network, and facilities (or equivalent public cloud services) below. This mapping, called the supply chain (see Figure 9-3), is the primary means of navigation in Workload Optimizer.

Figure 9-3 The Workload Optimizer supply chain

The supply chain shows each known entity as a colored ring. The color of a ring indicates the current state of the entity in terms of pending actions—red if there are critical pending performance or compliance actions, orange for prevention actions, yellow for efficiency-related actions, and green if no actions are pending. The center of each ring displays the known quantity of the given entity, and the connecting arrows illustrate consumers’ dependencies on other providers.
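The color scheme can be sketched as a simple lookup. The names below are hypothetical, and the rule that the most severe pending action determines an entity's color is an assumption made for illustration, not a documented behavior of the product.

```python
from enum import IntEnum


class Severity(IntEnum):
    NONE = 0        # no pending actions -> green
    EFFICIENCY = 1  # efficiency-related actions -> yellow
    PREVENTION = 2  # prevention actions -> orange
    CRITICAL = 3    # critical performance or compliance actions -> red


RING_COLOR = {
    Severity.NONE: "green",
    Severity.EFFICIENCY: "yellow",
    Severity.PREVENTION: "orange",
    Severity.CRITICAL: "red",
}


def ring_color(pending: list[Severity]) -> str:
    """Color an entity by its most severe pending action (assumed precedence)."""
    worst = max(pending, default=Severity.NONE)
    return RING_COLOR[worst]


print(ring_color([Severity.EFFICIENCY, Severity.CRITICAL]))
print(ring_color([]))
```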

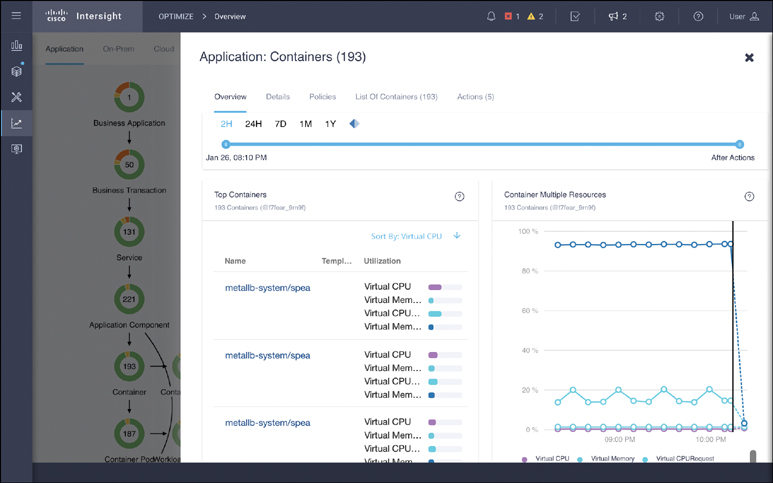

Clicking on a given entity in the supply chain opens a context-specific window with detailed information about that entity type. For example, in Figure 9-4, the Container entity in the supply chain has been selected, and you can see many container-relevant widgets, as well as a series of tabs for policies, actions, and so on.

Figure 9-4 Accessing additional details by clicking in the supply chain

Furthermore, the supply chain is dynamic, meaning that if a particular entity, such as a specific business application (for example, AD-Financial-Lite-ACI in Figure 9-5), is selected, the supply chain automatically reconfigures itself to depict just that single business application and only its related dependencies (including updating the color of the various rings and their respective entity counts). This is known as narrowing the scope of the supply chain view and is extremely helpful for focusing on a specific area of concern or work. Clicking on the Home link at the top-left of the Workload Optimizer screen or selecting Optimize > Overview on the main Intersight menu bar on the left returns you to the full scope of the supply chain.

Figure 9-5 Scoped supply chain view of a single application

Actions

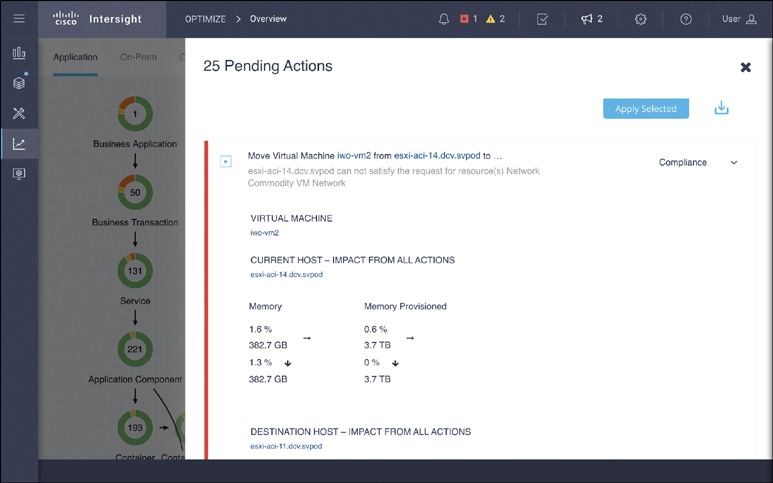

The essence of Workload Optimizer is action. Many infrastructure tools promise visibility, and some even provide some insights on top of that visibility. Workload Optimizer is designed to go further and act, in real time, to continuously drive the environment toward the desired state. Actions run the gamut from placement and scaling actions for workloads and storage to infrastructure start/stop/provision/decommission actions to public cloud purchase recommendations and many more.

Workload scaling and placement actions are often the most common and have the most impact. For VMs, Workload Optimizer leverages the underlying hypervisor to scale up or down resource capacity (for example, memory and CPU), reservations, and limits, as well as move VMs and datastores to nodes or clusters that can more optimally support their needs. Similarly, in Kubernetes environments, Workload Optimizer offers actions to right-size container vCPU and vMem requests and limits, move pods to different nodes to free up resources or avoid congestion, and so on. Keep in mind that the list of supported actions and their ability to be executed or automated via Workload Optimizer varies widely by target type and updates frequently. A current detailed list of actions and their execution support status via Workload Optimizer can be found in the Workload Optimizer Target Configuration Guide (http://cs.co/9006zF6Bi).

All actions follow an opt-in model; Workload Optimizer never takes an action unless given explicit permissions to do so, either via direct user input or through a custom policy. You can view a list of current actions via the Pending Actions dashboard widget in the supply chain view, via the Actions tab after clicking on a component in the supply chain, or in various scoped views and custom dashboards. Figure 9-6 shows an example of a specific move action.

Figure 9-6 Executing actions

Groups and Policies

When an organization first starts using Workload Optimizer, the number of pending actions can be significant, especially in a large, active, or poorly optimized environment. New organizations generally take a conservative approach initially and execute actions manually, verifying as they go that the actions are improving the environment and moving them closer to the desired state. Ultimately, though, the power of Workload Optimizer is best achieved through a judicious implementation of groups and policies to simplify the operating environment and to automate actions where possible.

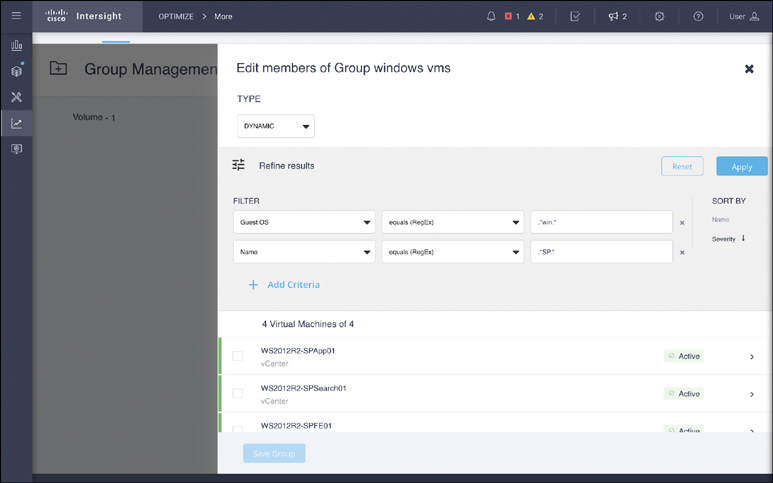

Groups

Workload Optimizer provides the capability of creating logical groups of resources (VMs, hosts, datastores, disk arrays, and so on) for ease of management, visibility, and automation. Groups can be either static (such as a fixed list of a named set of resources) or dynamic. Dynamic groups self-update their membership based on specific filter criteria—a query, effectively—to significantly simplify management. For example, you could create a dynamic group of VMs that belong to a specific application’s test environment, and you could further restrict membership of that group to just those running Microsoft Windows (see Figure 9-7, where .* is used as a catchall wildcard in the filter criteria).

Figure 9-7 Creating dynamic groups based on filter criteria

Generally, dynamic groups are preferred due to their self-updating nature. As new resources are provisioned or decommissioned, or as their status changes, their dynamic group membership adjusts accordingly, without any user input. This benefit is difficult to overstate, especially in larger environments. You should use dynamic groups whenever possible and static groups only when necessary.
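The self-updating behavior is easy to picture as a query that is re-evaluated against the current inventory. The following is a minimal sketch under stated assumptions: the `VM` type, the `dynamic_group` function, and the naming scheme are hypothetical and do not represent the Workload Optimizer data model. Only the use of `.*` as a catchall wildcard comes from the example above.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class VM:
    name: str
    guest_os: str


def dynamic_group(inventory: list[VM], name_pattern: str, os_pattern: str) -> list[VM]:
    """Re-evaluate the filter criteria against the current inventory on every call."""
    name_re = re.compile(name_pattern)
    os_re = re.compile(os_pattern)
    return [
        vm for vm in inventory
        if name_re.fullmatch(vm.name) and os_re.fullmatch(vm.guest_os)
    ]


inventory = [
    VM("fin-test-01", "Microsoft Windows Server 2019"),
    VM("fin-test-02", "Ubuntu Linux 22.04"),
    VM("fin-prod-01", "Microsoft Windows Server 2019"),
]
members = dynamic_group(inventory, r"fin-test-.*", r"Microsoft Windows.*")

# A newly provisioned VM joins the group without anyone editing a member list.
inventory.append(VM("fin-test-03", "Microsoft Windows Server 2022"))
members_after = dynamic_group(inventory, r"fin-test-.*", r"Microsoft Windows.*")
print([vm.name for vm in members_after])
```

A static group, by contrast, would be the equivalent of a hard-coded list of names that someone has to maintain by hand.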

Groups can be used in numerous ways. From the search screen, you can select a given group and automatically scope the supply chain to just that group. This is a handy way to zoom in on a specific subset of the infrastructure in a visibility or troubleshooting scenario or to customize a given widget in a dashboard. Groups can also be used to easily narrow the scope of a given plan or placement scenario (as described later in this chapter, in the section “Planning and Placement”).

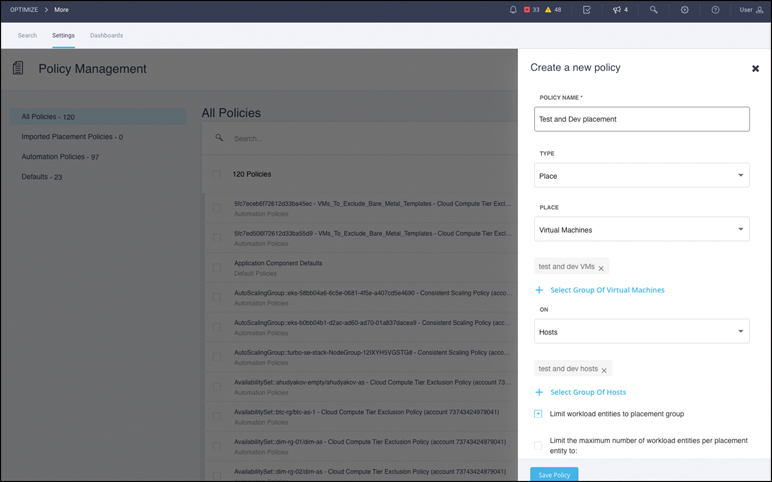

Policies

One of the most critical benefits of the use of groups arises when they are combined with policies. In Workload Optimizer, all actions are governed by one or more policies, including default global policies, user-defined custom policies, and imported placement policies. Policies provide extremely fine-grained control over the actions and automation behavior of Workload Optimizer.

Policies fall under two main categories: placement and automation. In both cases, groups (static or dynamic) are used to limit the scope of the policy.

Placement Policies

Placement policies govern which consumers (VMs, containers, storage volumes, datastores) can reside on which providers (VMs, physical hosts, volumes, disk arrays).

Affinity/Anti-affinity Policies

The most common use for placement policies is to create affinity or anti-affinity rules to meet business needs. For example, say that you have two dynamic groups, both owned by the testing and development team: one of VMs and another of physical hosts. To ensure that the testing and development VMs always run on testing and development hosts, you can create a placement policy that enables this constraint in Workload Optimizer, as shown in Figure 9-8.

Figure 9-8 Creating a new placement policy

The constraint you put into the policy then restricts the underlying economic decision engine that generates actions. The buying decisions that the VMs within the testing and development group make when shopping for resources are restricted to just the testing and development hosts, even if there might be other hosts that could otherwise serve those VMs. You might similarly constrain certain workloads with a specific license requirement to only run on (or never run on) a given host group that is (or isn’t) licensed for that purpose.
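The effect of such a constraint on the shopping decision can be sketched as a filter applied before the cheapest provider is chosen. Everything here is illustrative: the function names, the group contents, and the quoted prices are assumptions, not values or interfaces from Workload Optimizer.

```python
def allowed_providers(vm: str, quotes: dict[str, float],
                      vm_group: set[str], host_group: set[str]) -> dict[str, float]:
    """Return the hosts (name -> quoted price) a VM may shop from.

    VMs in vm_group are restricted to host_group; other VMs are unconstrained.
    """
    if vm in vm_group:
        return {h: p for h, p in quotes.items() if h in host_group}
    return dict(quotes)


def place(vm: str, quotes: dict[str, float],
          vm_group: set[str], host_group: set[str]) -> str:
    """Pick the cheapest host among those the placement policy allows."""
    candidates = allowed_providers(vm, quotes, vm_group, host_group)
    return min(candidates, key=candidates.get)


quotes = {"prod-host-1": 1.2, "dev-host-1": 3.5, "dev-host-2": 2.8}
dev_vms = {"dev-vm-7"}
dev_hosts = {"dev-host-1", "dev-host-2"}

constrained = place("dev-vm-7", quotes, dev_vms, dev_hosts)
unconstrained = place("other-vm", quotes, dev_vms, dev_hosts)
print(constrained, unconstrained)
```

The constrained VM ends up on the cheapest testing and development host even though a production host quotes a lower price, which mirrors the restriction described above.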

Merge Policies

Another placement policy type that can be especially useful is a merge policy. Such a policy logically combines two or more groups of resources such that the economic engine treats them as a single, fungible asset when making decisions.

The most common example of a merge policy is one that combines two or more VM clusters such that VMs can be moved between clusters. Traditionally, VM clusters are siloed islands of compute resources that can’t be shared. Sometimes this is done for specific business reasons, such as separating accounting for different data center tenants; in other words, sometimes the silos are built intentionally. But many times, they are unintentional: The fragmentation and subsequent underutilization of resources are merely a by-product of the artificial boundary imposed by the infrastructure and hypervisor. In such a scenario, you can create a merge policy that logically joins multiple clusters’ compute and storage resources, enabling Workload Optimizer to consider the best location for any given workload without being constrained by cluster boundaries. This ultimately leads to optimal utilization of all resources in a continued push toward the desired state.
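One way to picture a merge policy is as a widening of the set of hosts a VM is allowed to consider. The sketch below uses hypothetical cluster and host names and a made-up `candidate_hosts` helper; it illustrates the concept only.

```python
def candidate_hosts(vm_cluster: str, clusters: dict[str, list[str]],
                    merged: list[set[str]]) -> list[str]:
    """Hosts a VM may move to, given merge policies expressed as sets of cluster names."""
    scope = {vm_cluster}
    for group in merged:
        if vm_cluster in group:
            scope |= group  # the merged clusters behave as one pool
    return sorted(h for c in scope for h in clusters[c])


clusters = {"cluster-a": ["a1", "a2"], "cluster-b": ["b1"], "cluster-c": ["c1"]}

no_merge = candidate_hosts("cluster-a", clusters, merged=[])
with_merge = candidate_hosts("cluster-a", clusters, merged=[{"cluster-a", "cluster-b"}])
print(no_merge, with_merge)
```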

Automation Policies

The second category of policy in Workload Optimizer is automation policies, which govern how and when Workload Optimizer generates and executes actions. Like a placement policy, an automation policy is restricted to a specific scope of resources based on groups; however, unlike placement policies, automation policies can be restricted to run at specific times with schedules. Global default policies govern any resources that aren’t otherwise governed by another policy. It is therefore important to use extra caution when modifying a global default policy as any changes can be far-reaching.

Automation policies provide great control—either broad or extremely finely grained control—over the behavior of the decision engine and how actions are executed. For example, it’s common for organizations to enable nondisruptive VM resize-up actions for CPU and memory (for hypervisors that support such actions), but some organizations wish to further restrict these actions to specific groups of VMs (for example, testing and development only and not production), or to occur during certain pre-approved change windows, or to control the growth increment. Most critically, automation policies enable supported actions to be executed automatically by Intersight, eliminating the need for human intervention.
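The combination of scope, action type, execution mode, and schedule can be sketched as a small decision function. The `AutomationPolicy` class, its field names, and the mode strings are assumptions made for this illustration and are not the product's policy schema.

```python
from dataclasses import dataclass
from datetime import datetime, time
from typing import Optional


@dataclass
class AutomationPolicy:
    scope: set[str]                             # group members the policy applies to
    action_type: str                            # for example, "resize_up"
    mode: str                                   # "disabled", "manual", or "automatic"
    window: Optional[tuple[time, time]] = None  # optional daily change window


def decide(policy: AutomationPolicy, entity: str, action_type: str, now: datetime) -> str:
    """Return 'ignore', 'recommend', or 'execute' for a generated action."""
    if entity not in policy.scope or action_type != policy.action_type:
        return "recommend"  # stand-in for falling through to a default policy
    if policy.mode == "disabled":
        return "ignore"
    if policy.mode == "manual":
        return "recommend"
    if policy.window is not None:
        start, end = policy.window
        if not (start <= now.time() < end):
            return "recommend"  # outside the change window, so do not auto-execute
    return "execute"


policy = AutomationPolicy(
    scope={"dev-vm-1"},
    action_type="resize_up",
    mode="automatic",
    window=(time(1, 0), time(4, 0)),
)
in_window = decide(policy, "dev-vm-1", "resize_up", datetime(2024, 1, 1, 2, 30))
out_of_window = decide(policy, "dev-vm-1", "resize_up", datetime(2024, 1, 1, 12, 0))
out_of_scope = decide(policy, "prod-vm-1", "resize_up", datetime(2024, 1, 1, 2, 30))
print(in_window, out_of_window, out_of_scope)
```

In this sketch, switching `mode` from `"manual"` to `"automatic"` is the only change needed to move a group from recommended actions to automated ones.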

Best Practices

When implementing placement and automation policies, a crawl–walk–run approach is advisable.

Crawl

Crawling involves creating the necessary groups for a given policy, creating a policy scoped to those groups, and setting the policy’s action to manual so that actions are generated but not automatically executed.

This method provides administrators with the ability to double-check the group membership and manually validate that the actions are being generated as expected for only the desired groups of resources. Any needed adjustments can be made before manually executing the actions and validating that they do indeed move the environment closer to the desired state.

Walk

Walking involves changing an automation policy’s execution mechanism to automatic for relatively low-risk actions. The most common of these actions are VM and datastore placements, nondisruptive upsizing of datastores and volumes, and nondisruptive VM resize-up actions for CPU and memory.

Modern hypervisors and storage arrays can handle these actions with little to no impact on the running workloads, and automating them generally provides the greatest bang for the buck for most environments. More conservative organizations may want to begin automating a lower-priority subset of their resources (such as testing and development systems), as defined by groups. Combining these “walk” actions with a merge policy to join multiple clusters provides even more opportunity for optimization in a reasonably safe manner.

Run

Finally, running typically involves more complex policy interactions, such as schedule implementations, before- and after-action orchestration steps, and rollout of automation across the organization, including production environments.

During the run stage, it is critical to have well-defined groups that restrict unwanted actions. Many off-the-shelf applications such as SAP have extremely specific resource requirements that must be met to receive full vendor support. In such cases, organizations typically create a group, specific to an application, and add a policy for that group that disables all action generation for it, effectively telling Workload Optimizer to ignore the application. This can also be done for custom applications for which the development teams have similarly stringent resource requirements.

Planning and Placement

While the bulk of functionality built into Workload Optimizer is focused on acting in real time to continuously optimize, there are two additional modules: one that supports future-looking planning and another that supports workload placement.

Plan

Since the entire foundation of Workload Optimizer’s decision engine is its market abstraction governed by the economic laws of supply and demand, it is a straightforward exercise to ask what-if questions of the engine in the planning module.

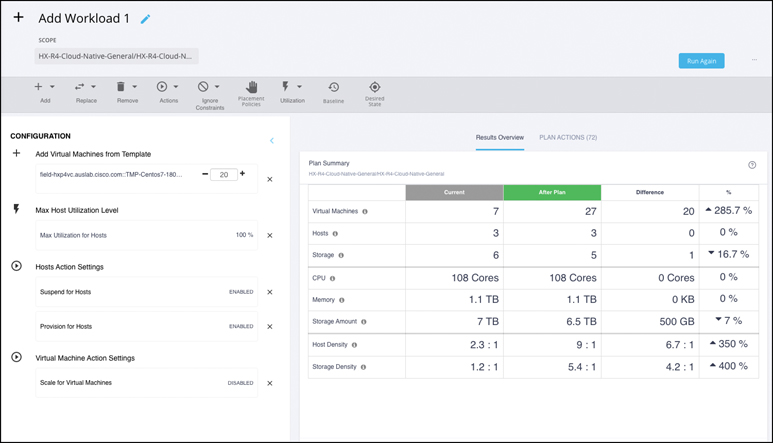

The planning function enables users to virtually change either the supply side (by adding or removing providers such as hosts or storage arrays) or the demand side (by adding or removing consumers such as VMs or containers) or both and then simulate the effect of the proposed change(s) on the live environment. Under the hood, this is a simple task for Workload Optimizer because it merely needs to run an extra market cycle with the new (simulated) input parameters. Just as in a live environment, the results of a plan (as shown in Figure 9-9) are actions.

Figure 9-9 Results of an added workload plan

Plans answer questions such as:

If four hosts were decommissioned, what actions would need to be taken to handle the workloads that they are currently running?

Does capacity exist elsewhere to handle the load, and if so, where should workloads be moved?

If there is not enough spare capacity, how much more and of what type will need to be bought/provisioned?
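The questions above can be illustrated with a deliberately simplified what-if simulation. The greedy heuristic of placing the largest displaced VM on the host with the most headroom, the single memory dimension, and all names are assumptions for this sketch. The real planning engine runs a full market cycle rather than this heuristic.

```python
def simulate_decommission(hosts: dict[str, float],
                          vms: dict[str, tuple[str, float]],
                          remove: set[str]):
    """What-if: remove hosts and re-place their VMs on the remaining capacity.

    hosts: host name -> memory capacity (GB)
    vms:   VM name -> (current host, memory demand in GB)
    Returns (moves, shortfall_gb), where moves is a list of (vm, old_host, new_host).
    """
    # Free memory on the hosts that survive the change.
    free = {h: cap for h, cap in hosts.items() if h not in remove}
    for vm, (host, demand) in vms.items():
        if host in free:
            free[host] -= demand

    moves, shortfall = [], 0.0
    # Place the largest displaced VMs first (a simple greedy heuristic).
    displaced = sorted(
        ((vm, h, d) for vm, (h, d) in vms.items() if h in remove),
        key=lambda item: -item[2],
    )
    for vm, old_host, demand in displaced:
        target = max(free, key=free.get, default=None)  # host with the most headroom
        if target is not None and free[target] >= demand:
            free[target] -= demand
            moves.append((vm, old_host, target))
        else:
            shortfall += demand  # capacity that would have to be bought or provisioned
    return moves, shortfall


hosts = {"h1": 64, "h2": 64, "h3": 64}
vms = {"vm1": ("h1", 32), "vm2": ("h1", 24), "vm3": ("h2", 40), "vm4": ("h3", 16)}

moves, shortfall = simulate_decommission(hosts, vms, remove={"h1"})
print(moves, shortfall)
```

As with a real plan, the output is a list of actions (here, moves) plus an indication of any capacity that would still be missing.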

The planning in Workload Optimizer takes the concept of traditional capacity planning, which can be a relatively crude exercise in projecting historical trend lines into the future, to a new level: Workload Optimizer does its planning and tells you exactly what actions will need to be taken in response to a given set of changes to maintain the desired state. One of the most frequently used planning types is the Migrate to Cloud simulation, which is addressed in greater detail later in this chapter, in the section “The Public Cloud.”

Placement

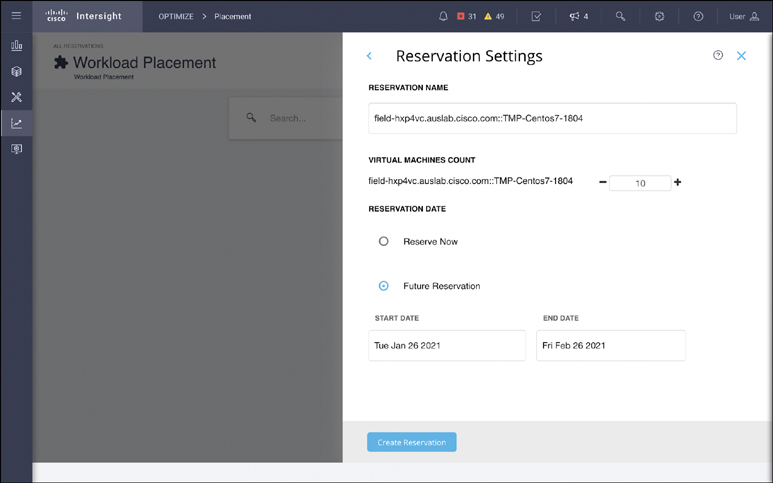

The placement module in Workload Optimizer is a variation on the planning theme but with real-world consequences. Placement reservations (see Figure 9-10) allow an administrator who knows that new workloads are coming into the environment soon to alter the demand side of all future market cycles, taking the yet-to-be-deployed workloads into account.

Figure 9-10 Creating a placement reservation

Such reservations force the economic engine to behave as if those workloads already exist, and the engine generates real actions accordingly. Reservations may therefore result in real changes to the environment, either automatically (if automation policies are active) or when the resulting pending actions, such as VM movements and server or storage provisioning to accommodate the proposed new workloads, are executed manually.

Using placement reservations is a great way both to plan for new workloads and to ensure that resources are available when those workloads are deployed. A handy feature of any placement reservation is the ability to delay making the reservation active until a point in the future, including the option of an end date for the reservation. This delays the effect of the reservation until a time closer to the actual deployment of the new workloads.
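The delayed-activation behavior can be sketched as a date check that decides whether a reservation's demand is counted in a given cycle. The `Reservation` class and its fields are hypothetical, and a single memory figure stands in for the full set of resources a real reservation would cover.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class Reservation:
    name: str
    vm_count: int
    mem_gb_each: float
    start: date
    end: Optional[date] = None  # open-ended when omitted


def reserved_demand_gb(reservations: list[Reservation], today: date) -> float:
    """Extra memory demand the engine should treat as if it already existed."""
    total = 0.0
    for r in reservations:
        active = r.start <= today and (r.end is None or today <= r.end)
        if active:
            total += r.vm_count * r.mem_gb_each
    return total


crm = Reservation("new-crm", vm_count=10, mem_gb_each=8,
                  start=date(2024, 6, 1), end=date(2024, 6, 30))

before = reserved_demand_gb([crm], date(2024, 5, 15))
during = reserved_demand_gb([crm], date(2024, 6, 10))
after = reserved_demand_gb([crm], date(2024, 7, 1))
print(before, during, after)
```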

The Public Cloud

In an on-premises data center, infrastructure is generally finite in scale and fixed in cost. By the time a new physical host hits the floor, the capital has been spent and has taken its hit on the business’s bottom line. Thus, the desired state in an on-premises environment is to assure workload performance and maximize utilization of the sunk cost of capital infrastructure. In the public cloud, however, infrastructure is effectively infinite. Resources are paid for as they are consumed—usually from an operating expenses budget rather than a capital budget.

The underlying market abstraction in Workload Optimizer is extremely flexible, and it can easily adjust to optimize for the emphasis on operating expenses. In the public cloud, the desired state is to ensure workload performance and minimize spending. This is a subtle but key distinction, as minimizing spending in the public cloud does not always mean placing a workload in the cloud VM instance that perfectly matches its requirements for CPU, memory, storage, and so on; instead, it means placing that workload in the cloud VM template that results in the lowest possible cost while still ensuring performance.
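The distinction between the best-matching template and the lowest-cost template can be shown with a small selection routine. The catalog entries and prices below are invented for illustration and do not correspond to any real AWS or Azure instance type or quote.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Template:
    name: str
    vcpus: int
    mem_gb: float
    hourly_cost: float  # hypothetical effective price, not a real quote


def cheapest_fit(templates: list[Template], need_vcpus: int,
                 need_mem_gb: float) -> Optional[Template]:
    """Return the lowest-cost template that still satisfies the requirements."""
    fits = [t for t in templates
            if t.vcpus >= need_vcpus and t.mem_gb >= need_mem_gb]
    return min(fits, key=lambda t: t.hourly_cost) if fits else None


catalog = [
    Template("exact-fit", vcpus=4, mem_gb=16, hourly_cost=0.20),
    Template("larger-but-discounted", vcpus=8, mem_gb=32, hourly_cost=0.15),
    Template("too-small", vcpus=2, mem_gb=8, hourly_cost=0.08),
]
best = cheapest_fit(catalog, need_vcpus=4, need_mem_gb=16)
print(best.name)
```

Here the template that exactly matches the workload is not the one selected, because a larger template happens to be cheaper while still meeting the performance requirement.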

On-Demand Versus Reserved Instances

The public cloud’s vast array of instance sizes and types offers endless choices for cloud administrators, all with slightly different resource profiles and costs. There are hundreds of different instance options in AWS and Azure, and new options and pricing are emerging almost daily. To further complicate matters, administrators have the option of consuming instances in an on-demand fashion—that is, in a pay-as-you-use model—or via reserved instances (RIs) that are paid for in advance for a specified term (usually a year or more). RIs can be incredibly attractive as they are typically heavily discounted compared to their on-demand counterparts, but they are not without pitfalls.

The fundamental challenge of consuming RIs is that public cloud customers pay for the RIs whether they use them or not. In this respect, RIs are more like the sunk cost of a physical server on premises than like the ongoing cost of an on-demand cloud instance. You can think of on-demand instances as being well suited for temporary or highly variable workloads, analogous to city dwellers taking taxis, which is usually cost-effective for short trips. RIs are akin to leasing a car, which is often the right economic choice for longer-term, more predictable usage patterns (such as commuting an hour to work each day). Whichever pricing model is in play, the flexibility of the underlying economic abstraction of Workload Optimizer is up to the challenge.
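The taxi-versus-lease trade-off comes down to a break-even calculation. The sketch below uses hypothetical prices (0.10 per hour on demand and 525.60 per year reserved) and a one-year term; real pricing varies by provider, region, and agreement.

```python
HOURS_PER_YEAR = 8760


def breakeven_utilization(on_demand_hourly: float, ri_annual_cost: float) -> float:
    """Fraction of the year an instance must run before the RI costs less."""
    return ri_annual_cost / (on_demand_hourly * HOURS_PER_YEAR)


def cheaper_option(on_demand_hourly: float, ri_annual_cost: float,
                   expected_hours: float) -> str:
    """Compare total yearly cost for the expected number of running hours."""
    on_demand_total = on_demand_hourly * expected_hours
    return "reserved" if ri_annual_cost < on_demand_total else "on-demand"


# Hypothetical prices for illustration only.
ratio = breakeven_utilization(on_demand_hourly=0.10, ri_annual_cost=525.60)
short_lived = cheaper_option(0.10, 525.60, expected_hours=2000)
long_lived = cheaper_option(0.10, 525.60, expected_hours=8000)
print(round(ratio, 2), short_lived, long_lived)
```

With these assumed prices, the RI pays off only if the instance runs more than about 60% of the year. A workload expected to run for 2,000 hours is cheaper on demand, whereas one running 8,000 hours is cheaper reserved.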

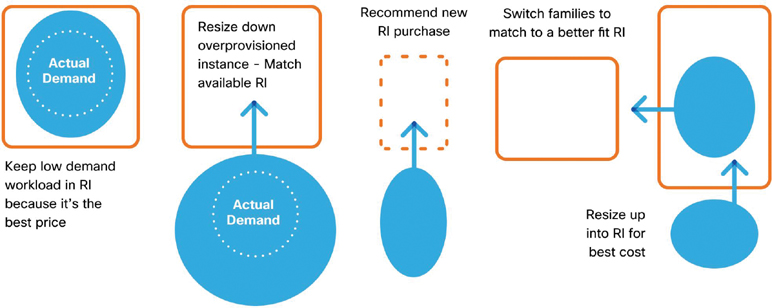

When faced with myriad instance options, cloud administrators are generally forced down one of two paths: Purchase RIs only for workloads that are deemed static and consume on-demand instances for everything else (hoping, of course, that static workloads really do remain that way) or choose a handful of RI instance types (for example, small, medium, and large) and shoehorn all workloads into the closest fit. Both methods leave a lot to be desired. In the first case, it’s not at all uncommon for static workloads to have their demand change over a year (or more) as new end users are added or new functionality comes online. In such cases, the workload needs to be relocated to a new instance type, and the administrator has an empty hole to fill in the form of the old, already paid-for RI (see the examples in Figure 9-11).

Figure 9-11 Fluctuating demand creates complexity with RI consumption

What should be done with that hole? What’s the best workload to move into it? Keep in mind that if that workload is coming from its own RI, the problem simply cascades downstream. The unpredictability and inefficiency of such headaches often negate the potential cost savings of RIs.

In the second scenario, limiting the RI choices almost by definition means mismatching workloads to instance types, negatively affecting either workload performance or cost savings or both. In either case, human beings, even with complicated spreadsheets and scripts, will invariably get the answer wrong because the scale of the problem is too large, and everything keeps changing all the time—so the analysis done last week or even yesterday is likely to be invalid today.

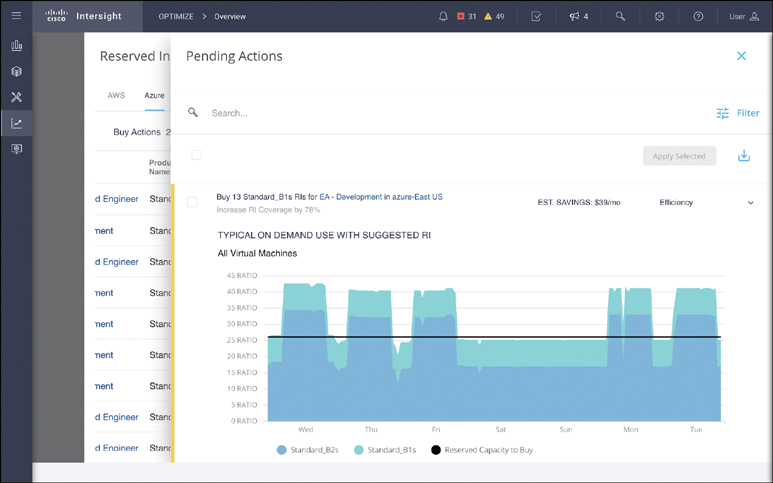

Thankfully, Workload Optimizer understands both on-demand instances and RIs in detail through its direct API target integrations. Workload Optimizer constantly receives real-time data on consumption, pricing, and instance options from cloud providers, and it combines this data with knowledge of applicable customer-specific pricing and enterprise agreements to determine the best actions available at any given point in time (see Figure 9-12).

Figure 9-12 A pending action to purchase additional RI capacity in Azure

Not only does Workload Optimizer understand current and historical workload requirements and an organization’s current RI inventory, but it can also intelligently recommend the optimal consumption of existing RI inventory and recommend additional RI purchases to minimize future spending. Continuing with the previous car analogy, in addition to knowing whether it’s better to pay for a taxi or lease a car in any given circumstance, Workload Optimizer can even suggest a car lease (RI purchase) that can be used as a vehicle for ride sharing (that is, fluidly moving on-demand workloads in and out of a given RI to achieve the lowest possible cost while still ensuring performance).
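To illustrate the idea of moving on-demand workloads into already paid-for RI capacity, here is a toy greedy heuristic. It is only a sketch under stated assumptions and does not represent Workload Optimizer's actual market-based analysis: the `ReservedInstance` and `Workload` types, the function name, and all sizes and prices are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ReservedInstance:
    ri_id: str
    vcpus: int
    memory_gib: int


@dataclass(frozen=True)
class Workload:
    name: str
    vcpus: int
    memory_gib: int
    on_demand_hourly: float  # what the workload costs today on demand


def fill_idle_ris(idle_ris, workloads):
    """Place the most expensive on-demand workloads into idle RIs first.

    Each workload goes to the smallest idle RI that still fits it, so that
    larger RIs stay free for larger workloads. Returns {workload: ri_id}.
    """
    free = sorted(idle_ris, key=lambda r: (r.vcpus, r.memory_gib))
    placement = {}
    for w in sorted(workloads, key=lambda w: w.on_demand_hourly, reverse=True):
        for ri in free:
            if ri.vcpus >= w.vcpus and ri.memory_gib >= w.memory_gib:
                placement[w.name] = ri.ri_id
                free.remove(ri)  # safe: we stop iterating immediately
                break
    return placement


if __name__ == "__main__":
    ris = [ReservedInstance("ri-large", 8, 32), ReservedInstance("ri-small", 2, 8)]
    wls = [
        Workload("web", 2, 4, 0.10),
        Workload("db", 8, 32, 0.40),
        Workload("batch", 4, 16, 0.20),
    ]
    print(fill_idle_ris(ris, wls))
```

In this example, `batch` stays on demand because no idle RI fits it. A real decision engine would also have to re-evaluate the placement continuously as demand, inventory, and pricing change, which is exactly what makes the problem impractical to solve by hand.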

Public Cloud Migrations

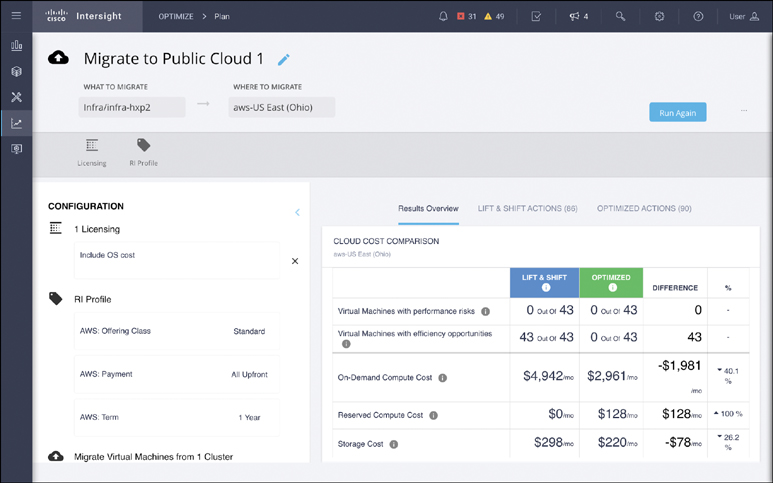

Finally, because Workload Optimizer understands both the on-premises and public cloud environments, it can bridge the gap between them. As noted in the previous section, the process of moving VM workloads to the public cloud can be simulated with a plan and the selection of specific VMs or VM groups to generate the optimal purchase actions required to run the workloads (see Figure 9-13).

Figure 9-13 Results of a cloud migration plan

The plan results offer two options: Lift & Shift and Optimized. The Lift & Shift column shows the recommended instances to buy and their costs, assuming no changes to the size of the existing VMs. The Optimized column allows for VM right-sizing in the process of moving to the cloud, which often results in a lower overall cost if current VMs are oversized relative to their workload needs. Software licensing (for example, bring your own versus buy from the cloud) and RI profile customizations are also available to further fine-tune the plan results.
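The difference between the two plan options can be sketched as follows. This is a simplified illustration rather than the product's algorithm: the instance catalog, the prices, the `headroom` parameter, and the use of peak utilization as the sizing signal are all assumptions made for the example.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class InstanceType:
    name: str
    vcpus: int
    memory_gib: int
    hourly: float  # hypothetical price


@dataclass(frozen=True)
class VM:
    name: str
    vcpus: int            # currently allocated
    memory_gib: int       # currently allocated
    peak_cpu_util: float  # observed peak, as a fraction of allocation
    peak_mem_util: float


def cheapest_fit(catalog, vcpus, memory_gib):
    """Return the lowest-priced instance type that satisfies the request."""
    candidates = [i for i in catalog
                  if i.vcpus >= vcpus and i.memory_gib >= memory_gib]
    if not candidates:
        raise ValueError("no instance type is large enough")
    return min(candidates, key=lambda i: i.hourly)


def lift_and_shift(catalog, vm):
    """Keep the VM's current allocation unchanged."""
    return cheapest_fit(catalog, vm.vcpus, vm.memory_gib)


def optimized(catalog, vm, headroom=0.25):
    """Right-size to observed peak demand plus a safety margin."""
    need_cpu = max(1, math.ceil(vm.vcpus * vm.peak_cpu_util * (1 + headroom)))
    need_mem = max(1, math.ceil(vm.memory_gib * vm.peak_mem_util * (1 + headroom)))
    return cheapest_fit(catalog, need_cpu, need_mem)


if __name__ == "__main__":
    catalog = [
        InstanceType("small", 2, 8, 0.10),
        InstanceType("medium", 4, 16, 0.20),
        InstanceType("large", 8, 32, 0.40),
    ]
    vm = VM("app01", vcpus=8, memory_gib=32, peak_cpu_util=0.25, peak_mem_util=0.25)
    print(lift_and_shift(catalog, vm).name, optimized(catalog, vm).name)
```

For an oversized VM such as `app01`, the right-sized choice lands on a smaller and cheaper instance type than the like-for-like move, which is the effect the Optimized column is meant to surface.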

Workload Optimizer’s unique ability to apply the same market abstraction and analysis to both on-premises and public cloud workloads in real time enables it to add value far beyond any cloud-specific or hypervisor-specific point-in-time tools that may be available. Besides being multivendor, multicloud, and real time by design, Workload Optimizer does not force administrators to choose between performance assurance and cost/resource optimization. In the modern application resource management paradigm of Workload Optimizer, performance assurance and cost/resource optimization are blended aspects of the desired state.

Summary

The flexibility and extensibility of the Intersight platform enable the rapid development of new features and capabilities. Additional hypervisor, application performance management, storage, and orchestrator targets are under development, as are additional reporting and application-specific support. Organizations find that the return on investment for Workload Optimizer is rapid as the cost savings it uncovers in the process of assuring performance and optimizing resources quickly exceed the license costs.