ACI is designed to allow small and large enterprises and service providers to build massively scalable data centers using a relatively small number of very flexible topologies. In this sample chapter from CCNP Data Center Application Centric Infrastructure 300-620 DCACI Official Cert Guide, the author team details the topologies with which an ACI fabric can be built or extended.

This chapter covers the following topics:

ACI Topologies and Components: This section describes the key hardware components and acceptable topologies for ACI fabrics.

APIC Clusters: This section covers available APIC hardware models and provides an understanding of APIC cluster sizes and failover implications.

Spine Hardware: This section addresses available spine hardware options.

Leaf Hardware: This section outlines the leaf platforms available for deployment in ACI fabrics.

This chapter covers the following exam topics:

1.1 Describe ACI topology and hardware

6.1 Describe Multi-Pod

6.2 Describe Multi-Site

ACI is designed to allow small and large enterprises and service providers to build massively scalable data centers using a relatively small number of very flexible topologies.

This chapter details the topologies with which an ACI fabric can be built or extended. Understanding supported ACI topologies helps guide decisions on target-state network architecture and hardware selection.

Each hardware component in an ACI fabric performs a specific set of functions. For example, leaf switches enforce security rules, and spine switches track all endpoints within a fabric in a local database.

But not all ACI switches are created equally. Nor are APICs created equally. This chapter therefore aims to provide a high-level understanding of some of the things to consider when selecting hardware.

“Do I Know This Already?” Quiz

The “Do I Know This Already?” quiz allows you to assess whether you should read this entire chapter thoroughly or jump to the “Exam Preparation Tasks” section. If you are in doubt about your answers to these questions or your own assessment of your knowledge of the topics, read the entire chapter. Table 2-1 lists the major headings in this chapter and their corresponding “Do I Know This Already?” quiz questions. You can find the answers in Appendix A, “Answers to the ‘Do I Know This Already?’ Questions.”

Table 2-1 “Do I Know This Already?” Section-to-Question Mapping

Foundation Topics Section |

Questions |

|---|---|

ACI Topologies and Components |

1–5 |

APIC Clusters |

6 |

Spine Hardware |

7, 8 |

Leaf Hardware |

9, 10 |

An ACI fabric is being extended to a secondary location to replace two top-of-rack switches and integrate a handful of servers into a corporate ACI environment. Which solution should ideally be deployed at the remote location if the deployment of new spines is considered cost-prohibitive and direct fiber links from the main data center cannot be dedicated to this function?

ACI Multi-Site

ACI Remote Leaf

ACI Multi-Tier

ACI Multi-Pod

Which of the following is a requirement for a Multi-Pod IPN that is not needed in an ACI Multi-Site ISN?

Increased MTU support

OSPF support on last-hop routers connecting to ACI spines

End-to-end IP connectivity

Multicast PIM-Bidir

Which of the following connections would ACI definitely block?

APIC-to-leaf cabling

Leaf-to-leaf cabling

Spine-to-leaf cabling

Spine-to-spine cabling

Which of the following are valid reasons for ACI Multi-Site requiring more specialized spine hardware? (Choose all that apply.)

Ingress replication of BUM traffic

IP fragmentation

Namespace normalization

Support for PIM-Bidir for multicast forwarding

Which of the following options best describes border leaf switches?

Border leaf switches provide Layer 2 and 3 connectivity to outside networks.

Border leaf switches connect to Layer 4–7 service appliances, such as firewalls and load balancers.

Border leaf switches are ACI leaf switches that connect to servers.

Border leaf switches serve as the border between server network traffic and FCoE storage traffic.

Which of the following statements is accurate?

A three-node M3 cluster of APICs can scale up to 200 leaf switches.

Sharding is a result of the evolution of what is called horizontal partitioning of databases.

The number of shards distributed among APICs for a given attribute is directly correlated to the number of APICs deployed.

A standby APIC actively synchronizes with active APICs and has a copy of all attributes within the APIC database at all times.

Out of the following switches, which are spine platforms that support ACI Multi-Site? (Choose all that apply.)

Nexus 93180YC-EX

Nexus 9364C

Nexus 9736C-FX line card

Nexus 9396PX

Which of the following is a valid reason for upgrading a pair of Nexus 9336PQ ACI switches to second-generation Nexus 9332C spine hardware? (Choose all that apply.)

Namespace normalization for ACI Multi-Site support

Support for 40 Gbps leaf-to-spine connectivity

Support for CloudSec

Support for ACI Multi-Pod

True or false: The Nexus 93180YC-FX leaf switch supports MACsec.

True

False

Which of the following platforms is a low-cost option for server CIMC and other low-bandwidth functions that rely on RJ-45 connectivity?

Nexus 9336C-FX2

Nexus 93180YC-FX

Nexus 9332C

Nexus 9348GC-FXP

ACI Topologies and Components

Like many other current data center fabrics, ACI fabrics conform to a Clos-based leaf-and-spine topology.

In ACI, leaf and spine switches are each responsible for different functions. Together, they create an architecture that is highly standardized across deployments. Cisco has introduced several new connectivity models and extensions for ACI fabrics over the years, but none of these changes break the core ACI topology that has been the standard from day one. Any topology modifications introduced in this section should therefore be seen as slight enhancements that help address specific use cases and not as deviations from the standard ACI topology.

Clos Topology

In his 1952 paper titled “A Study of Non-blocking Switching Networks,” Bell Laboratories researcher Charles Clos formalized how multistage telephone switching systems could be built to forward traffic, regardless of the number of calls served by the overall system.

The mathematical principles proposed by Clos also help address the challenge of needing to build highly scalable data centers using relatively low-cost switches.

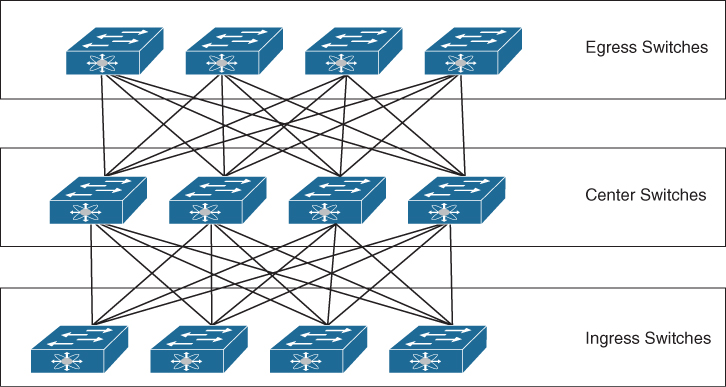

Figure 2-1 illustrates a three-stage Clos fabric consisting of one layer for ingress traffic, one layer for egress traffic, and a central layer for forwarding traffic between the layers. Multistage designs such as this can result in networks that are not oversubscribed or that are very close to not being oversubscribed.

Figure 2-1 Conceptual View of a Three-Stage Clos Topology

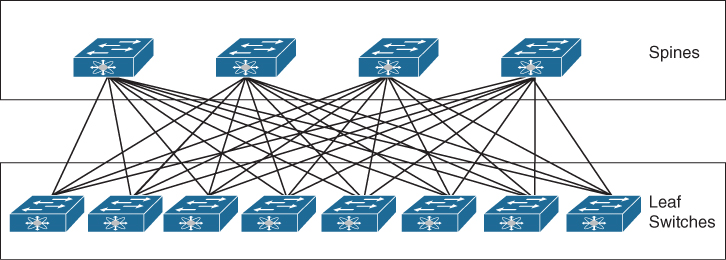

Modern data center switches forward traffic at full duplex. Therefore, there is little reason to depict separate layers for ingress and egress traffic. It is possible to fold the top layer from the three-tier Clos topology in Figure 2-1 into the bottom layer to achieve what the industry refers to as a “folded” Clos topology, illustrated in Figure 2-2.

Figure 2-2 Folded Clos Topology

As indicated in Figure 2-2, a leaf switch is an ingress/egress switch. A spine switch is an intermediary switch whose most critical function is to perform rapid forwarding of traffic between leaf switches. Leaf switches connect to spine switches in a full-mesh topology.

Standard ACI Topology

An ACI fabric forms a Clos-based spine-and-leaf topology and is usually depicted using two rows of switches. Depending on the oversubscription and overall network throughput requirements, the number of spines and leaf switches will be different in each ACI fabric.

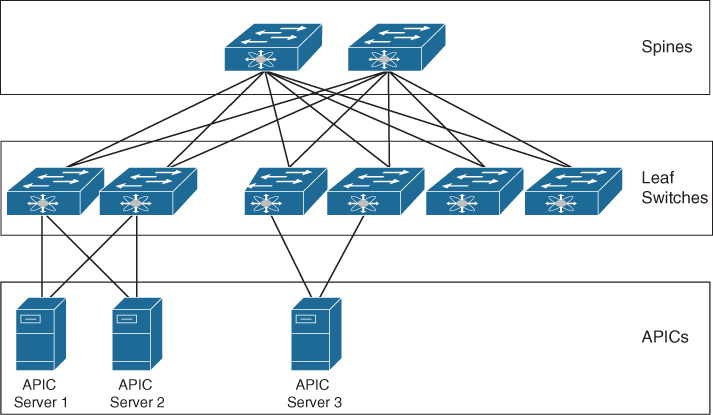

Figure 2-3 shows the required components and cabling for an ACI fabric. Inheriting from its Clos roots, no cables should be connected between ACI leaf switches. Likewise, ACI spines being cross-cabled results in ACI disabling the cross-connected ports. While the topology shows a full mesh of cabling between the spine-and-leaf layers, a fabric can operate without a full mesh. However, a full mesh of cables between layers is still recommended.

Figure 2-3 Standard ACI Fabric Topology

In addition to optics and cabling, the primary hardware components required to build an ACI fabric are as follows:

Application Policy Infrastructure Controllers (APICs): The APICs are the brains of an ACI fabric and serve as the single source of truth for configuration within the fabric. A clustered set of (typically three) controllers attaches directly to leaf switches and provides management, policy programming, application deployment, and health monitoring for an ACI fabric. Note in Figure 2-3 that APICs are not in the data path or the forwarding topology. Therefore, the failure of one or more APICs does not halt packet forwarding. An ACI fabric requires a minimum of one APIC, but an ACI fabric with one APIC should be used only for lab purposes.

Spine switches: ACI spine switches are Clos intermediary switches that have a number of key functions. They exchange routing updates with leaf switches via Intermediate System-to-Intermediate System (IS-IS) and perform rapid forwarding of packets between leaf switches. They provide endpoint lookup services to leaf switches through the Council of Oracle Protocol (COOP). They also handle route reflection to leaf switches using Multiprotocol BGP (MP-BGP), allowing external routes to be distributed across the fabric regardless of the number of tenants. (All three of these are control plane protocols and are covered in more detail in future chapters.) Spine switches also serve as roots for multicast trees within a fabric. By default, all spine switch interfaces besides the mgmt0 port are configured as fabric ports. Fabric ports are the interfaces that are used to interconnect spine and leaf switches within a fabric.

Leaf switches: Leaf switches are the ingress/egress points for traffic into and out of an ACI fabric. As such, they are the connectivity points for endpoints, including servers and appliances, into the fabric. Layer 2 and 3 connectivity from the outside world into an ACI fabric is also typically established via leaf switches. ACI security policy enforcement occurs on leaf switches. Each leaf switch has a number of high-bandwidth uplink ports preconfigured as fabric ports.

In addition to the components mentioned previously, optional hardware components that can be deployed alongside an ACI fabric include fabric extenders (FEX). Use of FEX solutions in ACI is not ideal because leaf hardware models currently on the market are generally low cost and feature heavy compared to FEX technology.

FEX attachment to ACI is still supported to allow for migration of brownfield gear into ACI fabrics. The DCACI 300-620 exam does not cover specific FEX model support, so neither does this book.

Engineers may sometimes dedicate two or more leaf switches to a particular function. Engineers typically evaluate the following categories of leaf switches as potential options for dedicating hardware:

Border Leaf: Border leaf switches provide Layer 2 and 3 connectivity between an ACI fabric and the outside world. Border leaf switches are sometimes points of policy enforcement between internal and external endpoints.

Service Leaf: Service leaf switches are leaf switches that connect to Layer 4–7 service appliances, such as firewalls and load balancers.

Compute Leaf: Compute leaf switches are ACI leaf switches that connect to servers. Compute leaf switches are points of policy enforcement when traffic is being sent between local endpoints.

IP Storage Leaf: IP storage leaf switches are ACI leaf switches that connect to IP storage systems. IP storage leaf switches can also be points of policy enforcement for traffic to and from local endpoints.

There are scalability benefits associated with dedicating leaf switches to particular functions, but if the size of the network does not justify dedicating leaf switches to a function, consider at least dedicating a pair of leaf switches as border leaf switches. Service leaf functionality can optionally be combined with border leaf functionality, resulting in the deployment of a pair (or more) of collapsed border/service leaf switches in smaller environments.

Cisco publishes a Verified Scalability Guide for each ACI code release. At the time of this writing, 500 is considered the maximum number of leaf switches that can be safely deployed in a single fabric that runs on the latest code.

ACI Stretched Fabric Topology

A stretched ACI fabric is a partially meshed design that connects ACI leaf and spine switches distributed in multiple locations. The stretched ACI fabric design helps lower deployment costs when full-mesh cable runs between all leaf and spine switches in a fabric tend to be cost-prohibitive.

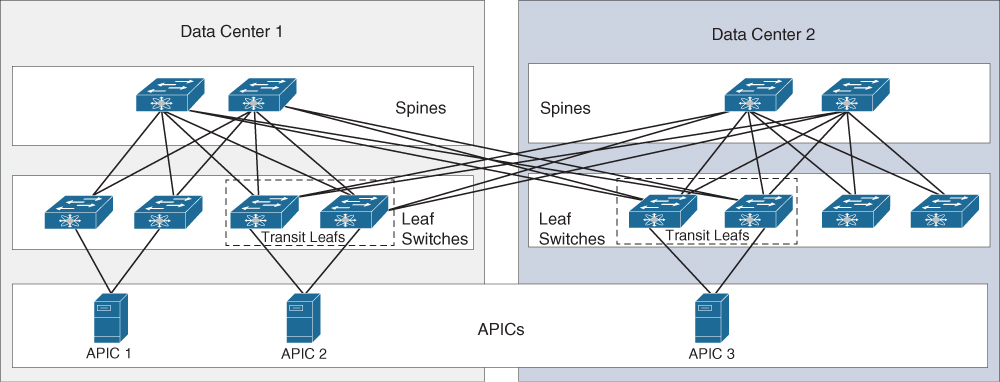

Figure 2-4 shows a stretched ACI fabric across two sites.

Figure 2-4 ACI Stretched Fabric Topology

A stretched fabric amounts to a single administrative domain and a single availability zone. Because APICs in a stretched fabric design tend to be spread across sites, cross-site latency is an important consideration. APIC clustering has been validated across distances of 800 kilometers between two sites.

A new term introduced in Figure 2-4 is transit leaf. A transit leaf is a leaf switch that provides connectivity between two sites in a stretched fabric design. Transit leaf switches connect to spine switches in both sites. No special configuration is required for transit leaf switches. At least one transit leaf switch must be provisioned in each site for redundancy reasons.

While stretched fabrics simplify extension of an ACI fabric, this design does not provide the benefits of newer topologies such as ACI Multi-Pod and ACI Multi-Site and stretched fabrics are therefore no longer commonly deployed or recommended.

ACI Multi-Pod Topology

The ACI Multi-Pod topology is a natural evolution of the ACI stretched fabric design in which spine and leaf switches are divided into pods, and different instances of IS-IS, COOP, and MP-BGP protocols run inside each pod to enable a level of control plane fault isolation.

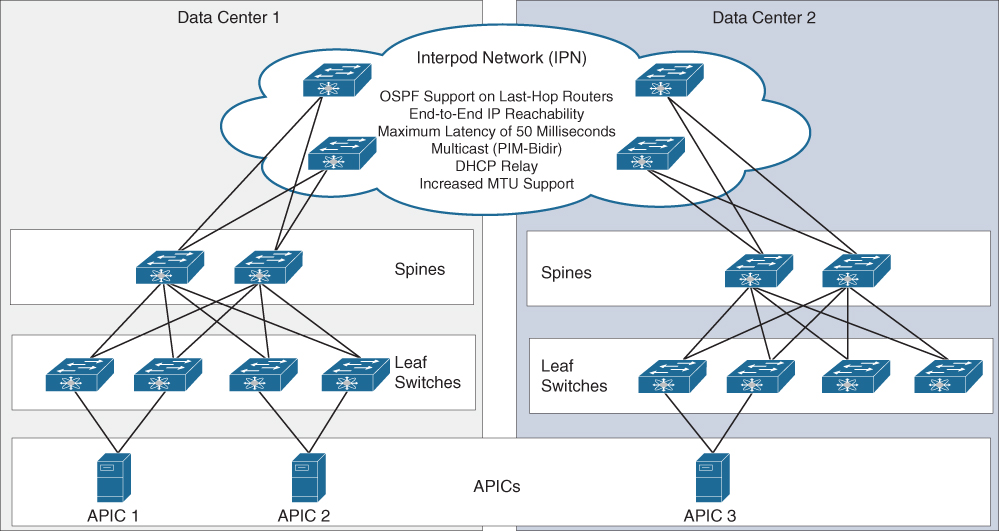

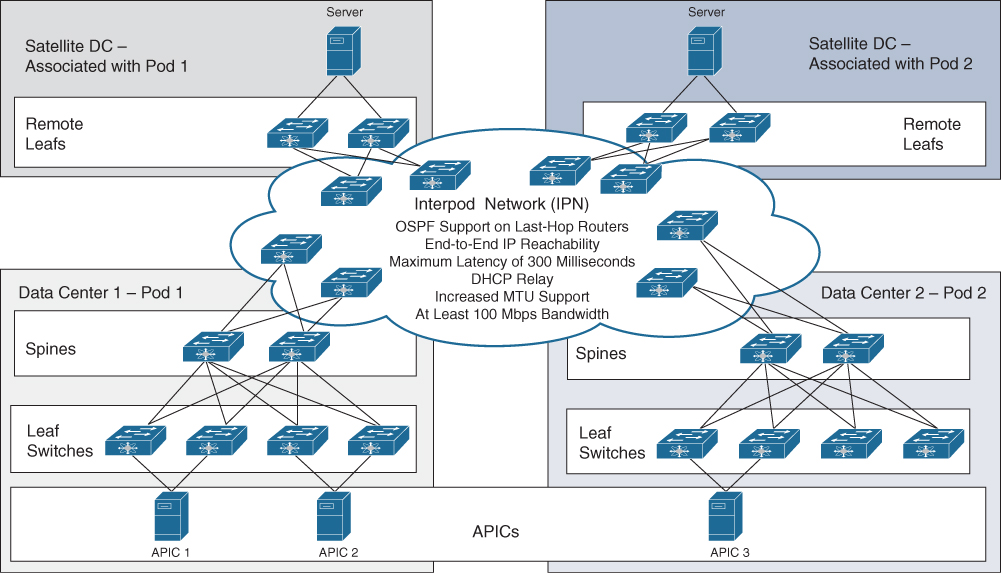

Spine switches in each pod connect to an interpod network (IPN). Pods communicate with one another through the IPN. Figure 2-5 depicts an ACI Multi-Pod topology.

An ACI Multi-Pod IPN has certain requirements that include support for OSPF, end-to-end IP reachability, DHCP relay capabilities on the last-hop routers that connect to spines in each pod, and an increased maximum transmission unit (MTU). In addition, a Multi-Pod IPN needs to support forwarding of multicast traffic (PIM-Bidir) to allow the replication of broadcast, unknown unicast, and multicast (BUM) traffic across pods.

One of the most significant use cases for ACI Multi-Pod is active/active data center design. Although ACI Multi-Pod supports a maximum round-trip time latency of 50 milliseconds between pods, most Multi-Pod deployments are often built to achieve active/active functionality and therefore tend to have latencies of less than 5 milliseconds.

Figure 2-5 ACI Multi-Pod Topology

On the issue of scalability, it should be noted that as of the time of writing, 500 is the maximum number of leaf switches that can be safely deployed within a single ACI fabric. However, the Verified Scalability Guide for the latest code revisions specifies 400 as the absolute maximum number of leaf switches that can be safely deployed in each pod. Therefore, for a fabric to reach its maximum supported scale, leaf switches should be deployed across at least 2 pods within a Multi-Pod fabric. Each pod supports deployment of 6 spines, and each Multi-Pod fabric currently supports the deployment of up to 12 pods.

Chapter 16, “ACI Anywhere,” covers ACI Multi-Pod in more detail. For now, understand that Multi-Pod is functionally a single fabric and a single availability zone, even though it does not represent a single network failure domain.

ACI Multi-Site Topology

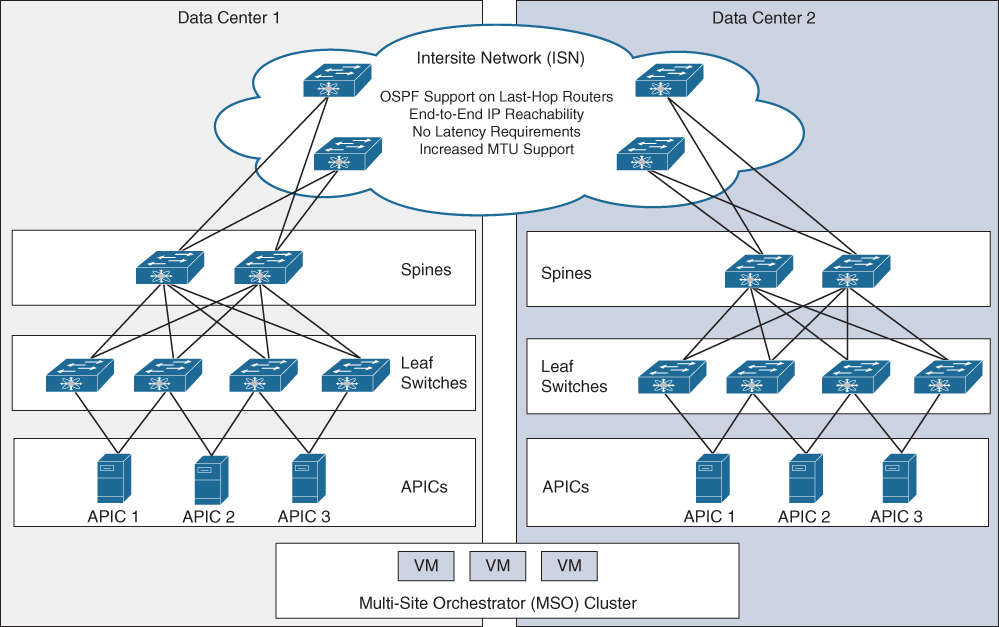

ACI Multi-Site is a solution that interconnects multiple ACI fabrics for the purpose of homogenous policy deployment across ACI fabrics, homogenous security policy deployment across on-premises ACI fabrics and public clouds, and cross-site stretched subnet capabilities, among others.

In an ACI Multi-Site design, each ACI fabric has its own dedicated APIC cluster. A clustered set of three nodes called Multi-Site Orchestrator (MSO) establishes API calls to each fabric independently and can configure tenants within each fabric with desired policies.

Figure 2-6 shows an ACI Multi-Site topology that leverages a traditional VM-based MSO cluster.

Figure 2-6 ACI Multi-Site Topology

As indicated in Figure 2-6, end-to-end communication between sites in an ACI Multi-Site design requires the use of an intersite network (ISN). An ACI Multi-Site ISN faces less stringent requirements compared to ACI Multi-Pod IPNs. In an ISN, end-to-end IP connectivity between spines across sites, OSPF on the last-hop routers connecting to the spines, and increased MTU support allowing VXLAN-in-IP encapsulation are all still required. However, ACI Multi-Site does not dictate any cross-site latency requirements, nor does it require support for multicast or DHCP relay within the ISN.

ACI Multi-Site does not impose multicast requirements on the ISN because ACI Multi-Site has been designed to accommodate larger-scale ACI deployments that may span the globe. It is not always feasible or expected for a company that has a global data center footprint to also have a multicast backbone spanning the globe and between all data centers.

Due to the introduction of new functionalities that were not required in earlier ACI fabrics, Cisco introduced a second generation of spine hardware. Each ACI fabric within an ACI Multi-Site design requires at least one second-generation or newer piece of spine hardware for the following reasons:

Ingress replication of BUM traffic: To accommodate BUM traffic forwarding between ACI fabrics without the need to support multicast in the ISN, Multi-Site-enabled spines perform ingress replication of BUM traffic. This function is supported only on second-generation spine hardware.

Cross-fabric namespace normalization: Each ACI fabric has an independent APIC cluster and therefore an independent brain. When policies and parameters are communicated between fabrics in VXLAN header information, spines receiving cross-site traffic need to have a way to swap remotely significant parameters, such as VXLAN network identifiers (VNIDs), with equivalent values for the local site. This function, which is handled in hardware and is called namespace normalization, requires second-generation or newer spines.

Note that in contrast to ACI Multi-Site, ACI Multi-Pod can be deployed using first-generation spine switches.

For ACI Multi-Site deployments, current verified scalability limits published by Cisco suggest that fabrics with stretched policy requirements that have up to 200 leaf switches can be safely incorporated into ACI Multi-Site. A single ACI Multi-Site deployment can incorporate up to 12 fabrics as long as the total number of leaf switches in the deployment does not surpass 1600.

Each fabric in an ACI Multi-Site design forms a separate network failure domain and a separate availability zone.

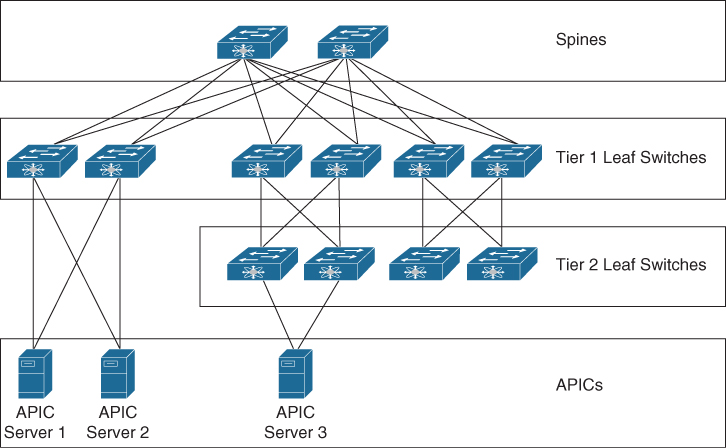

ACI Multi-Tier Architecture

Introduced in Release 4.1, ACI Multi-Tier provides the capability for vertical expansion of an ACI fabric by adding an extra layer or tier of leaf switches below the standard ACI leaf layer.

With the Multi-Tier enhancement, the standard ACI leaf layer can also be termed the Tier 1 leaf layer. The new layer of leaf switches that are added to vertically expand the fabric is called the Tier 2 leaf layer. Figure 2-7 shows these tiers. APICs, as indicated, can attach to either Tier 1 or Tier 2 leaf switches.

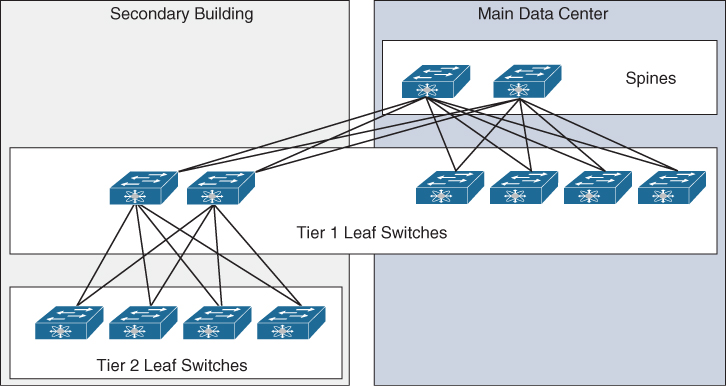

Figure 2-7 ACI Multi-Tier Topology

An example of a use case for ACI Multi-Tier is the extension of an ACI fabric across data center halls or across buildings that are in relatively close proximity while minimizing long-distance cabling and optics requirements. Examine the diagram in Figure 2-8. Suppose that an enterprise data center has workloads in an alternate building. In this case, the company can deploy a pair of Tier 1 leaf switches in the new building and expand the ACI fabric to the extent needed within the building by using a Tier 2 leaf layer. Assuming that 6 leaf switches would have been required to accommodate the port requirements in the building, as Figure 2-8 suggests, directly cabling these 6 leaf switches to the spines as Tier 1 leaf switches would have necessitated 12 cross-building cables. However, the use of an ACI Multi-Tier design enables the deployment of the same number of switches using 4 long-distance cable runs.

ACI Multi-Tier can also be an effective solution for use within data centers in which the cable management strategy is to minimize inter-row cabling and relatively low-bandwidth requirements exist for top-of-rack switches. In such a scenario, Tier 1 leaf switches can be deployed end-of-row, and Tier 2 leaf switches can be deployed top-of-rack.

Figure 2-8 Extending an ACI Fabric by Using ACI Multi-Tier in an Alternative Location

Not all ACI switch platforms support Multi-Tier functionality.

Remote Leaf Topology

For remote sites in which data center endpoints may be deployed but their number and significance do not justify the deployment of an entirely new fabric or pod, the ACI Remote Leaf solution can be used to extend connectivity and ensure consistent policies between the main data center and the remote site. With such a solution, leaf switches housed at the remote site communicate with spines and APICs at the main data center over a generic IPN. Each Remote Leaf switch can be bound to a single pod.

There are three main use cases for Remote Leaf deployments:

Satellite/small colo data centers: If a company has a small data center consisting of several top-of-rack switches and the data center may already have dependencies on a main data center, this satellite data center can be integrated into the main data center by using the Remote Leaf solution.

Data center extension and migrations: Cross-data center migrations that have traditionally been done through Layer 2 extension can instead be performed by deploying a pair of Remote Leafs in the legacy data center. This approach often has cost benefits compared to alternative Layer 2 extension solutions if there is already an ACI fabric in the target state data center.

Telco 5G distributed data centers: Telcom operators that are transitioning to more distributed mini data centers to bring services closer to customers but still desire centralized management and consistent policy deployment across sites can leverage Remote Leaf for these mini data centers.

In addition to these three main use cases, disaster recovery (DR) is sometimes considered a use case for Remote Leaf deployments, even though DR is a use case more closely aligned with ACI Multi-Site designs.

In a Remote Leaf solution, the APICs at the main data center deploy policy to the Remote Leaf switches as if they were locally connected.

Figure 2-9 illustrates a Remote Leaf solution.

Figure 2-9 Remote Leaf Topology and IPN Requirements

IPN requirements for a Remote Leaf solution are as follows:

MTU: The solution must support an end-to-end MTU that is at least 100 bytes higher than that of the endpoint source traffic. Assuming that 1500 bytes has been configured for data plane MTU, Remote Leaf can be deployed using a minimum MTU of 1600 bytes. An IPN MTU this low, however, necessitates that ACI administrators lower the ACI fabricwide control plane MTU, which is 9000 bytes by default.

Latency: Up to 300 milliseconds latency between the main data center and remote location is acceptable.

Bandwidth: Remote Leaf is supported with a minimum IPN bandwidth of 100 Mbps.

VTEP reachability: A Remote Leaf switch logically associates with a single pod if integrated into a Multi-Pod solution. To make this association possible, the Remote Leaf should be able to route traffic over the IPN to the VTEP pool of the associated pod. Use of a dedicated VRF for IPN traffic is recommended where feasible.

APIC infra IP reachability: A Remote Leaf switch needs IP connectivity with all APICs in a Multi-Pod cluster at the main data center. If an APIC has assigned itself IP addresses from a VTEP range different than the pod VTEP pool, the additional VTEP addresses need to also be advertised over the IPN.

OSPF support on upstream routers: Routers northbound of both the Remote Leaf switches and the spine switches need to support OSPF and must be able to encapsulate traffic destined to directly attached ACI switches using VLAN 4. This requirement exists only for directly connected devices and does not extend end-to-end in the IPN.

DHCP relay: The upstream router directly connected to Remote Leaf switches needs to enable DHCP relay to relay DHCP packets to the APIC IP addresses in the infra tenant. The DHCP relay configuration needs to be applied on the VLAN 4 subinterface or SVI.

Note that unlike a Multi-Pod IPN, a Remote Leaf IPN does not require Multicast PIM-Bidir support. This is because the Remote Leaf solution uses headend replication (HER) tunnels to forward BUM traffic between sites.

In a Remote Leaf design, traffic between known local endpoints at the remote site is switched directly, whether physically or virtually. Any traffic whose destination is in ACI but is unknown or not local to the remote site is forwarded to the main data center spines.

Not all ACI switches support Remote Leaf functionality. The current maximum verified scalability number for Remote Leaf switches is 100 per fabric.

APIC Clusters

The ultimate size of an APIC cluster should be directly proportionate to the size of the Cisco ACI deployment. From a management perspective, any active APIC controller in a cluster can service any user for any operation. Controllers can be transparently added to or removed from a cluster.

APICs can be purchased either as physical or virtual appliances. Physical APICs are 1 rack unit (RU) Cisco C-Series servers with ACI code installed and come in two different sizes: M for medium and L for large. In the context of APICs, “size” refers to the scale of the fabric and the number of endpoints. Virtual APICs are used in ACI mini deployments, which consist of fabrics with up to two spine switches and four leaf switches.

As hardware improves, Cisco releases new generations of APICs with updated specifications. At the time of this writing, Cisco has released three generations of APICs. The first generation of APICs (M1/L1) shipped as Cisco UCS C220 M3 servers. Second-generation APICs (M2/L2) were Cisco UCS C220 M4 servers. Third-generation APICs (M3/L3) are shipping as UCS C220 M5 servers.

Table 2-2 details specifications for current M3 and L3 APICs.

Table 2-2 M3 and L3 APIC Specifications

Component |

M3 |

L3 |

|---|---|---|

Processor |

2x 1.7 GHz Xeon scalable 3106/85W 8C/11MB cache/DDR4 2133MHz |

2x 2.1 GHz Xeon scalable 4110/85W 8C/11MB cache/DDR4 2400MHz |

Memory |

6x 16 GB DDR4-2666-MHz RDIMM/PC4-21300/single rank/x4/1.2v |

12x 16 GB DDR4-2666-MHz RDIMM/PC4-21300/single rank/x4/1.2v |

Hard drive |

2x 1 TB 12G SAS 7.2K RPM SFF HDD |

2x 2.4 TB 12G SAS 10K RPM SFF HDD (4K) |

Network cards |

1x Cisco UCS VIC 1455 Quad Port 10/25G SFP28 CNA PCIE |

1x Cisco UCS VIC 1455 Quad Port 10/25G SFP28 CNA PCIE |

Note in Table 2-2 that the only differences between M3 and L3 APICs are the sizes of their CPUs, memory, and hard drives. This is because fabric growth necessitates that increased transaction rates be supported, which drives up compute requirements.

Table 2-3 shows the hardware requirements for virtual APICs.

Table 2-3 Virtual APIC Specifications

Component |

Virtual APIC |

|---|---|

Processor |

8 vCPUs |

Memory |

32 GB |

Hard drive* |

300 GB HDD 100 GB SSD |

Supported ESXi hypervisor version |

6.5 or above |

* A VM is deployed with two HDDs.

APIC Cluster Scalability and Sizing

APIC cluster hardware is typically purchased from Cisco in the form of a bundle. An APIC bundle is a collection of one or more physical or virtual APICs, and the bundle that needs to be purchased depends on the desired target state scalability of the ACI fabric.

Table 2-4 shows currently available APIC cluster hardware options and the general scalability each bundle can individually achieve.

Table 2-4 APIC Hardware Bundles

Part Number |

Number of APICs |

General Scalability |

|---|---|---|

APIC-CLUSTER-XS (ACI mini bundle) |

1 M3 APIC, 2 virtual APICs, and 2 Nexus 9332C spine switches |

Up to 2 spines and 4 leaf switches |

APIC-CLUSTER-M3 |

3 M3 APICs |

Up to 1200 edge ports |

APIC-CLUSTER-L3 |

3 L3 APICs |

More than 1200 edge ports |

APIC-CLUSTER-XS specifically addresses ACI mini fabrics. ACI mini is a fabric deployed using two Nexus 9332C spine switches and up to four leaf switches. ACI mini is suitable for lab deployments, small colocation deployments, and deployments that are not expected to span beyond four leaf switches.

APIC-CLUSTER-M3 is designed for medium-sized deployments where the number of server ports connecting to ACI is not expected to exceed 1200, which roughly translates to 24 leaf switches.

APIC-CLUSTER-L3 is a bundle designed for large-scale deployments where the number of server ports connecting to ACI exceeds or will eventually exceed 1200.

Beyond bundles, Cisco allows customers to purchase individual APICs for the purpose of expanding an APIC cluster to enable further scaling of a fabric. Once a fabric expands beyond 1200 edge ports, ACI Verified Scalability Guides should be referenced to determine the optimal number of APICs for the fabric.

According to Verified Scalability Guides for ACI Release 4.1(1), an APIC cluster of three L3 APICs should suffice in deployments with up to 80 leaf switches. However, the cluster size would need to be expanded to four or more APICs to allow a fabric to scale up to 200 leaf switches.

Each APIC cluster houses a distributed multi-active database in which processes are active on all nodes. Data, however, is distributed or sliced across APICs via a process called database sharding. Sharding is a result of the evolution of what is called horizontal partitioning of databases and involves distributing a database across multiple instances of the schema. Sharding increases both redundancy and performance because a large partitioned table can be split across multiple database servers. It also enables a scale-out model involving adding to the number of servers as opposed to having to constantly scale up servers through hardware upgrades.

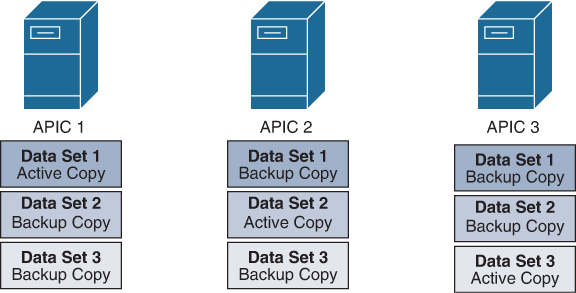

ACI shards each attribute within the APIC database to three nodes. A single APIC out of the three is considered active (the leader) for a given attribute at all times. If the APIC that houses the active copy of a particular slice or partition of data fails, the APIC cluster is able to recover via the two backup copies of the data residing on the other APICs. This is why the deployment of a minimum of three APICs is advised. Any APIC cluster deployed with fewer than three APICs is deemed unsuitable for production uses. Note that only the APIC that has been elected leader for a given attribute can modify the attribute.

Figure 2-10 provides a conceptual view of data sharding across a three-APIC cluster. For each data set or attribute depicted, a single APIC is elected leader. Assume that the active copy indicates that the APIC holding the active copy is leader for the given attribute.

Figure 2-10 Data Sharding Across Three APICs

For a portion of a database to allow writes (configuration changes), a quorum of APICs housing the pertinent database attributes undergoing a write operation must be healthy and online. Because each attribute in an APIC database is sharded into three copies, a quorum is defined as two copies. If two nodes in a three-node APIC cluster were to fail simultaneously, the remaining APIC would move the entire database into a read-only state, and no configuration changes would be allowed until the quorum was restored.

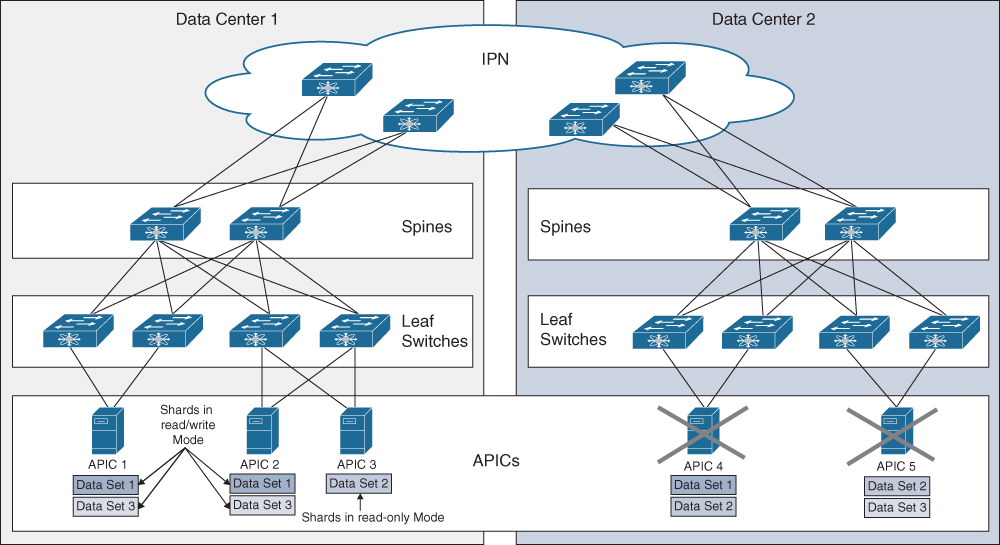

When an APIC cluster scales to five or seven APICs, the sharding process remains unchanged. In other words, the number of shards of a particular subset of data does not increase past three, but the cluster further distributes the shards. This means that cluster expansion past three APICs does not increase the redundancy of the overall APIC database.

Figure 2-11 illustrates how an outage of Data Center 2, which results in the failure of two APICs, could result in portions of the APIC database moving into a read-only state. In this case, the operational APICs have at least two shards for Data Sets 1 and 3, so administrators can continue to make configuration changes involving these database attributes. However, Data Set 2 is now in read-only mode because two replicas of the attribute in question have been lost.

As Figure 2-11 demonstrates, increasing APIC cluster size to five or seven does not necessarily increase the redundancy of the overall cluster.

A general recommendation in determining APIC cluster sizes is to deploy three APICs in fabrics scaling up to 80 leaf switches. If recoverability is a concern, a standby APIC can be added to the deployment. A total of five or seven APICs should be deployed for scalability purposes in fabrics expanding beyond 80 leaf switches.

If, for any reason, a fabric with more than three APICs is bifurcated, the APIC cluster attempts to recover this split-brain event. Once connectivity across all APICs is restored, automatic reconciliation takes place within the cluster, based on timestamps.

Figure 2-11 Impact of APIC Failures in a Five-Node Cluster

What would happen if Data Center 1 in Figure 2-11 failed instead of Data Center 2, and all shards for a specific subset of data resided in Data Center 1 at the time of the outage? In such a scenario, the failure of three APICs could lead to the hypothetical loss of all three shards of a specific subset of data. To ensure that a total loss of a given pod does not result in the loss of all shards for a given attribute, Cisco recommends that no more than two APICs be placed in a single pod.

Spine Hardware

Cisco ACI spine hardware options includes Nexus 9300 Series fixed form factor switches as well as Nexus 9500 modular switches. Not all switches in the noted switch families can be deployed in ACI mode.

The primary factors that guide spine purchasing decisions are desired port bandwidths, feature requirements, hardware generation, and the required number of target state ports.

Whereas a fixed spine switch has a limited number of ports, a port in a modular platform can scale with the addition of more line cards to a chassis. For this reason, modular chassis are more suitable for fabrics that require massive scale.

Fixed spine platforms satisfy the scalability requirements of small to medium fabrics without problem.

First-Generation Spine Switches

As noted earlier in this chapter, first-generation spine switches are not supported as spines interconnecting ACI fabrics in ACI Multi-Site deployments. Other new solutions, such as Remote Leaf and ACI Multi-Tier also require second-generation spine switches. Understanding first-generation spine platforms is, however, beneficial for historical purposes because a large number of ACI deployments still contain first-generation hardware.

First-generation ACI spine switch models on the market at the time of this writing have model numbers that end in PQ. Table 2-5 lists first-generation Nexus spine switches.

Table 2-5 First-Generation Spine Switches

Characteristic |

Nexus 9336PQ |

Nexus 9736PQ |

|---|---|---|

Form factor |

2 RU fixed switch |

Line card for modular chassis |

Supported modular platforms |

N/A |

Nexus 9504 Nexus 9508 Nexus 9516 |

40 Gigabit Ethernet ports |

36 ports |

36 ports |

100 Gigabit Ethernet ports |

N/A |

N/A |

ACI Multi-Pod support |

Yes |

Yes |

CloudSec support |

No |

No |

Remote Leaf support |

No |

No |

ACI Multi-Tier support |

No |

No |

ACI Multi-Site support |

No |

No |

Even though first-generation spine switches do not support namespace normalization or ingress replication of BUM traffic, they can coexist with second-generation spine switches within a fabric. This coexistence enables companies to integrate fabrics into ACI Multi-Site without having to decommission older spines before the regular hardware refresh cycle.

Second-Generation Spine Switches

In addition to providing support for ACI Multi-Site, Remote Leaf, and ACI Multi-Tier, second-generation spine switch ports operate at both 40 Gigabit Ethernet and 100 Gigabit Ethernet speeds and therefore enable dramatic fabric bandwidth upgrades.

Second-generation spine switches also support MACsec and CloudSec. MACsec enables port-to-port encryption of traffic in transit at line rate. CloudSec enables cross-site encryption at line rate, eliminating the need for intermediary devices to support or perform encryption. Cross-site encryption is also referred to as VTEP-to-VTEP encryption.

Second-generation ACI spine switch models on the market at the time of this writing have model numbers that end in C, EX, and FX. Table 2-6 provides additional details about second-generation spine platforms.

Table 2-6 Second-Generation Spine Switches

Characteristic |

Nexus 9364C |

Nexus 9332C |

Nexus 9732C-EX |

Nexus 9736C-FX |

|---|---|---|---|---|

Form factor |

2 RU fixed |

1 RU fixed |

Line card for modular chassis |

Line card for modular chassis |

Supported modular platforms |

N/A |

N/A |

Nexus 9504 Nexus 9508 Nexus 9516 |

Nexus 9504 Nexus 9508 Nexus 9516 |

40/100 Gigabit Ethernet ports |

64 |

32 |

32 |

36 |

ACI Multi-Pod support |

Yes |

Yes |

Yes |

Yes |

CloudSec support |

Last 16 ports |

Last 8 ports |

N/A |

All ports |

Remote Leaf support |

Yes |

Yes |

Yes |

Yes |

ACI Multi-Tier support |

Yes |

Yes |

Yes |

Yes |

ACI Multi-Site support |

Yes |

Yes |

Yes |

Yes |

In addition to the hardware listed in Table 2-6, Nexus 9732C-FX line cards will be supported as ACI spine line cards in the near future.

New spine switches with 100/400 Gigabit Ethernet ports are also on the horizon. The Nexus 9316D-GX is already available and is supported as an ACI spine. This platform is also in the roadmap for support as a leaf switch. The 100/400 Gigabit Ethernet Nexus 93600CD-GX switch, which is supported as an ACI leaf, is also in the roadmap for use as a spine.

Cisco uses the term cloud scale to refer to the newer Nexus switch models that contain the specialized ASICs needed for larger buffer sizes, larger endpoint tables, and visibility into packets and flows traversing the switch without impacting CPU utilization. Second-generation ACI spine switches fall into the category of cloud-scale switches.

Leaf Hardware

Cisco ACI leaf hardware options include Nexus 9300 Series fixed form factor switches. Not all switches in the noted switch families can be deployed in ACI mode.

The primary factors that guide leaf purchasing decisions are the desired port bandwidths, feature requirements, hardware generation, and the required number of target state ports.

First-Generation Leaf Switches

First-generation ACI leaf switches are Nexus 9300 Series platforms that are based on the Application Leaf Engine (ALE) ASICs.

The hardware resources that enable whitelisting of traffic are ternary content-addressable memory (TCAM) resources, referred to as the policy CAM.

Policy CAM sizes vary depending on the hardware. The policy CAM size and behavior limitations in first-generation switches tended to sometimes limit whitelisting projects.

There are also a number of other capability differences between first- and second-generation leaf hardware, such as handling of Layer 4 operations and multicast routing.

Second-Generation Leaf Switches

Second-generation ACI leaf switches are Nexus 9300 Series platforms that are based on cloud-scale ASICs. Second-generation leaf switches support Remote Leaf and ACI Multi-Tier, have significantly larger policy CAM sizes, and offer enhanced hardware capabilities and port speeds.

ACI leaf switches whose model numbers end in EX, FX, FX2, and FXP are considered second-generation leaf switches. Table 2-7 provides details about second-generation switches that have 1/10 Gigabit Ethernet copper port connectivity for servers.

Table 2-7 Second-Generation 1/10 Gigabit Ethernet Copper Leaf Switches

Characteristic |

Nexus 93108TC-EX |

Nexus 9348GC-FXP |

Nexus 93108TC-FX |

Nexus 93216TC-FX2 |

|---|---|---|---|---|

Form factor |

1 RU fixed |

1 RU fixed |

1 RU fixed |

2 RU fixed |

100 Mbps and1 Gigabit Ethernet copper ports |

N/A |

48 |

N/A |

N/A |

100 Mbps and 1/10 Gigabit Ethernet copper ports |

48 |

N/A |

48 |

96 |

10/25 Gigabit Ethernet ports |

N/A |

N/A |

4 |

N/A |

40/100 Gigabit Ethernet ports |

6 |

2 |

6 |

12 |

ACI Multi-Pod support |

Yes |

Yes |

Yes |

Yes |

Remote Leaf support |

Yes |

Yes |

Yes |

Yes |

Can be used as a Tier 1 leaf |

Yes |

Yes |

Yes |

Yes |

Can be used as a Tier 2 leaf |

Yes |

Yes |

Yes |

Yes |

The Nexus 9348GC-FXP switch has 48 ports, offering 100 Mbps or 1 Gigabit Ethernet connectivity. These ports have RJ-45 connections, eliminating the need for transceivers. Due to its low cost and support for cloud-scale features, the Nexus 9348GC-FXP is an ideal replacement for Fabric Extenders.

Table 2-8 details second-generation switches that provide 1/10/25 Gigabit Ethernet fiber port connectivity for servers.

Table 2-8 Second-Generation 1/10/25 Gigabit Ethernet Fiber Leaf Switches

Characteristic |

Nexus 93180YC-EX |

Nexus 93180YC-FX |

Nexus 93240YC-FX2 |

Nexus 93360YC-FX2 |

|---|---|---|---|---|

Form factor |

1 RU fixed |

1 RU fixed |

1.2 RU fixed |

2 RU fixed |

1/10/25 Gigabit Ethernet ports |

48 |

48 |

48 |

96 |

40/100 Gigabit Ethernet ports |

6 |

6 |

12 |

12 |

ACI Multi-Pod support |

Yes |

Yes |

Yes |

Yes |

Remote Leaf support |

Yes |

Yes |

Yes |

Yes |

Can be used as a Tier 1 leaf |

Yes |

Yes |

Yes |

Yes |

Can be used as a Tier 2 leaf |

Yes |

Yes |

Yes |

Yes |

Table 2-9 lists details on the only second-generation switch available at the time of writing that provides 40/100 Gigabit Ethernet connectivity for servers.

Table 2-9 Second-Generation 40/100 Gigabit Ethernet Leaf Switches

Characteristic |

Nexus 9336C-FX2 |

|---|---|

Form factor |

1 RU fixed |

40/100 Gigabit Ethernet ports |

36 |

ACI Multi-Pod support |

Yes |

Remote Leaf support |

Yes |

Can be used as a Tier 1 leaf |

Yes |

Can be used as a Tier 2 leaf |

Yes |

Exam Preparation Tasks

As mentioned in the section “How to Use This Book” in the Introduction, you have a couple of choices for exam preparation: Chapter 17, “Final Preparation,” and the exam simulation questions in the Pearson Test Prep Software Online.

Review All Key Topics

Review the most important topics in this chapter, noted with the Key Topic icon in the outer margin of the page. Table 2-10 lists these key topics and the page number on which each is found.

Table 2-10 Key Topics for Chapter 2

Key Topic Element |

Description |

Page Number |

|---|---|---|

List |

Describes APICs, spine switches, and leaf switches |

23 |

List |

Describes some functions engineers commonly evaluate when deciding whether to dedicate leaf switches to functions |

24 |

Paragraph |

Describes ACI Multi-Pod |

25 |

Paragraph |

Calls out requirements for an ACI Multi-Pod IPN |

25 |

Paragraph |

Describes ACI Multi-Site |

26 |

Paragraph |

Explains APIC cluster separation in ACI Multi-Site fabrics and MSO communication with each cluster |

27 |

Paragraph |

Calls out requirements for an ACI Multi-Site ISN |

27 |

Paragraph |

Explains why ACI Multi-Site requires the use of at least one Gen 2 spine in each site |

28 |

Paragraph |

Describes Remote Leaf |

30 |

Paragraph |

Explains the significance of sizes in APIC purchases and the relevance of M versus L models |

32 |

Paragraph |

Explains APIC hardware generations and correlation with UCS C-Series server generations |

32 |

Table 2-5 |

Lists first-generation spine switches |

37 |

Table 2-6 |

Lists second-generation spine switches |

38 |

Table 2-7 |

Lists second-generation 1/10 Gigabit Ethernet copper leaf switches |

39 |

Table 2-8 |

Lists second-generation 1/10/25 Gigabit Ethernet fiber leaf switches |

40 |

Table 2-9 |

Lists second-generation 40/100 Gigabit Ethernet leaf switches |

40 |

Define Key Terms

Define the following key terms from this chapter and check your answers in the glossary:

fabric port

border leaf

service leaf

compute leaf

IP storage leaf

stretched ACI fabric

transit leaf

ACI Multi-Pod

ACI Multi-Site

sharding